Data Science for Beginners (Part Two Final)

The second part of this introduction to data science. It covers k-nearest neighbors, support vector machines, neural networks, and more.

PS: Please read Data Science for Beginners (Part One) before reading this one. This part picks up where Part One left off.

Part One: Data Science for Beginners (Part One)

2. Regression Coefficients

Regression coefficients are the weights assigned to the predictors in a regression. Each coefficient measures how strong its predictor is in the presence of the other predictors.

We want to standardize the units of the predictor variables before conducting analysis, since different predictors may be measured in different units. For example, we don’t want to compare a predictor measured in centimeters with another one measured in meters. Standardization expresses each variable in terms of standard deviations from its mean (z-scores). The coefficients of standardized predictors are called beta weights. Because every predictor is now on the same scale, the beta weights can be compared directly, which leads to a more accurate comparison.
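To make this concrete, here is a minimal NumPy sketch that fits ordinary least squares to made-up data. The names `standardize` and `beta_weights` are hypothetical helpers, not part of any library. If you express the first predictor in centimeters instead of meters, its raw coefficient changes by a factor of 100. Its beta weight, however, stays the same.

```python
import numpy as np

def standardize(a):
    """Convert values (or each column of a 2-D array) to z-scores: (value - mean) / std."""
    return (a - a.mean(axis=0)) / a.std(axis=0)

def beta_weights(X, y):
    """Least-squares coefficients after standardizing both the predictors and the outcome.
    No intercept is needed because standardized variables have mean zero."""
    coef, *_ = np.linalg.lstsq(standardize(X), standardize(y), rcond=None)
    return coef

# Hypothetical data: two predictors, the first one measured in meters
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = 2 * X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.1, size=200)

X_cm = X.copy()
X_cm[:, 0] *= 100  # same first predictor, now expressed in centimeters

print(beta_weights(X, y))
print(beta_weights(X_cm, y))  # same beta weights as the line above
```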

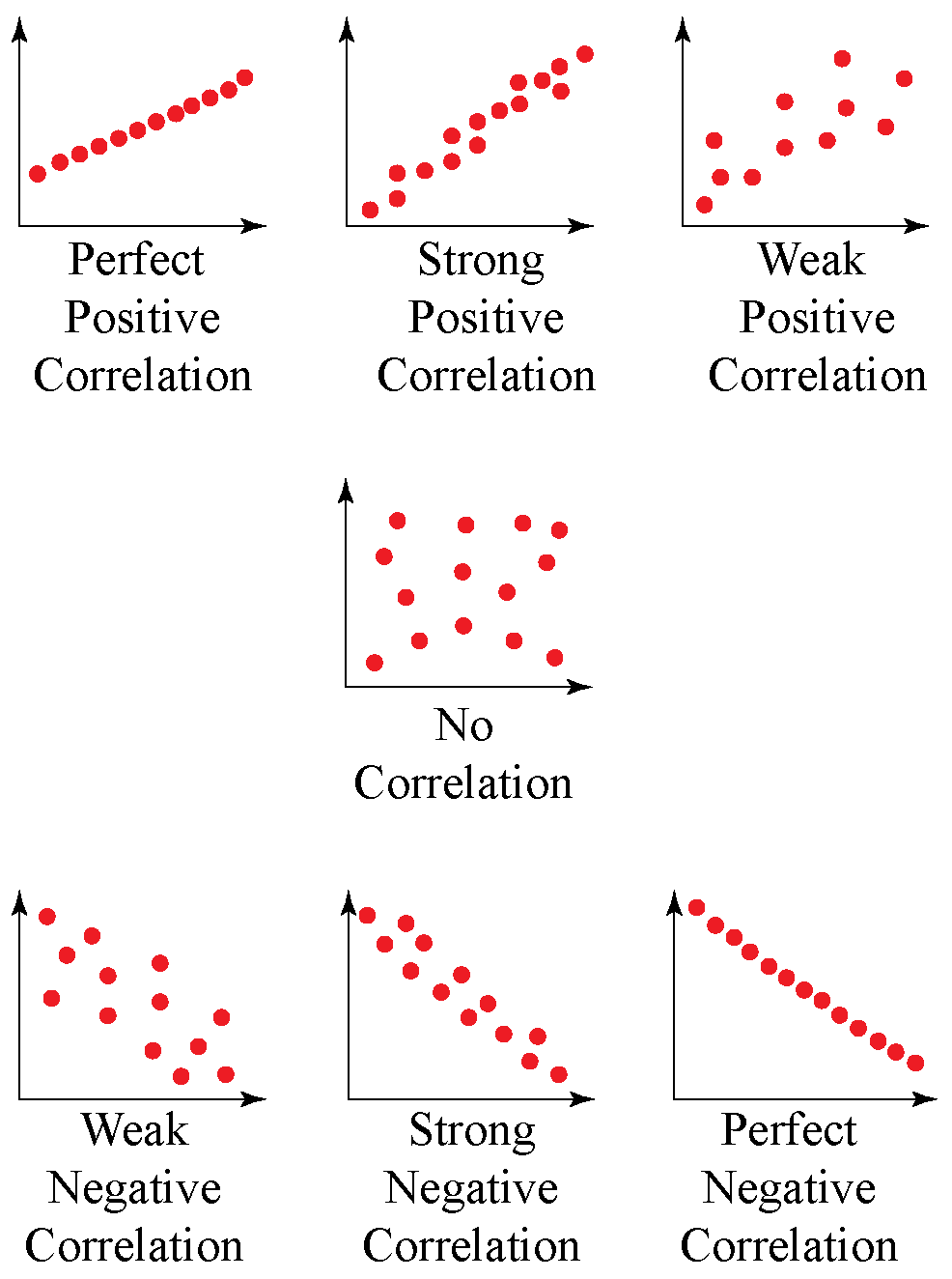

3. Correlation Coefficients

When there is only one predictor, its beta weight is called the correlation coefficient, denoted as r. Correlation coefficients range from -1 to 1 and provide:

Direction: a positive coefficient means the predictor moves in the same direction as the outcome; a negative coefficient means they move in opposite directions.

Magnitude: the closer the coefficient is to 1 or -1, the stronger the predictor. A coefficient of zero means there is no linear relationship between the predictor and the outcome.
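The sketch below computes r for a single predictor and checks that it matches the beta weight. The data (hours of practice against errors made) is invented for illustration, and both function names are hypothetical. The slope of a one-predictor regression on standardized variables comes out equal to r.

```python
import numpy as np

def pearson_r(x, y):
    """Correlation coefficient r: the average product of the two variables' z-scores."""
    zx = (x - x.mean()) / x.std()
    zy = (y - y.mean()) / y.std()
    return float(np.mean(zx * zy))

def single_beta_weight(x, y):
    """Slope of a one-predictor regression fitted on standardized x and y."""
    zx = (x - x.mean()) / x.std()
    zy = (y - y.mean()) / y.std()
    slope, _intercept = np.polyfit(zx, zy, 1)
    return float(slope)

# Hypothetical data: errors fall as practice hours rise
hours = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
errors = np.array([9.0, 8.0, 8.0, 5.0, 4.0, 2.0])

print(pearson_r(hours, errors))           # negative: they move in opposite directions
print(single_beta_weight(hours, errors))  # the same value as r
```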

4. Limitations

Regression analysis does have limitations such as:

Sensitive to Outliers: a few points with extreme values can skew the trend line significantly. We can use a scatterplot to identify outliers before analyzing further.

Curved Trends: some trends are curved rather than straight, so we have to use alternative algorithms or transform the predictor values.

Distorted Weights: highly correlated predictors distort the interpretation of their weights, a problem known as multicollinearity. To solve this, we can exclude correlated predictors or use techniques such as ridge or lasso regression.
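Here is a small sketch of how ridge regression copes with multicollinearity. It uses the closed-form ridge solution on invented data in which the second predictor is almost a copy of the first. The penalty strength `lam=1.0` is an arbitrary assumption, and lasso is not shown because it needs an iterative solver.

```python
import numpy as np

def ols(X, y):
    """Ordinary least squares: minimize ||X @ b - y||^2."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

def ridge(X, y, lam=1.0):
    """Ridge regression: solve (X^T X + lam * I) b = X^T y, which penalizes large weights."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

# Hypothetical data: x2 is nearly identical to x1 (strong multicollinearity)
rng = np.random.default_rng(0)
x1 = rng.normal(size=100)
x2 = x1 + rng.normal(scale=1e-3, size=100)
X = np.column_stack([x1, x2])
y = x1 + x2 + rng.normal(scale=0.5, size=100)  # true weights are 1 and 1

print(ols(X, y))    # the two weights can be far apart and hard to interpret
print(ridge(X, y))  # the two weights stay close to each other
```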

k-Nearest Neighbors and Anomaly Detection

k-Nearest Neighbors (k-NN) is an algorithm that classifies a data point based on the classes of its nearest neighbors. For example, if a data point is surrounded by five blue points and one red point, the algorithm will suggest that the data point is most likely blue.
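The blue-and-red example can be sketched in plain Python using majority voting. The function name `knn_classify` and the point coordinates are made up for illustration.

```python
from collections import Counter
import math

def knn_classify(point, data, k):
    """Label `point` by majority vote among its k nearest labeled neighbors.
    data: list of ((x, y), label) pairs."""
    nearest = sorted(data, key=lambda item: math.dist(point, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical neighbors: five blue points and one red point around the origin
neighbors = [((1, 0), "blue"), ((0, 1), "blue"), ((-1, 0), "blue"),
             ((0, -1), "blue"), ((1, 1), "blue"), ((-1, -1), "red")]

print(knn_classify((0, 0), neighbors, k=6))  # blue
```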

In the blue-and-red example above, k equals six, the number of neighbors consulted. Choosing the right value of k is critical for prediction accuracy:

If k is too large, distant points also get a vote, which dilutes the underlying patterns.

If k is too small, random errors in a few nearby points get amplified.

To get the best fit, we can tune the parameter k by cross-validation.
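The next sketch tunes k with leave-one-out cross-validation, which is one form of cross-validation. Each point is predicted from all the other points, and we keep the k with the highest accuracy. The two clusters and the candidate values of k are hypothetical.

```python
from collections import Counter
import math

def knn_classify(point, data, k):
    """Majority vote among the k labeled points nearest to `point`."""
    nearest = sorted(data, key=lambda item: math.dist(point, item[0]))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def loo_accuracy(data, k):
    """Leave-one-out cross-validation: predict each point from all the others."""
    correct = 0
    for i, (point, label) in enumerate(data):
        rest = data[:i] + data[i + 1:]
        correct += knn_classify(point, rest, k) == label
    return correct / len(data)

def best_k(data, candidates):
    """Return the candidate k with the highest cross-validated accuracy."""
    return max(candidates, key=lambda k: loo_accuracy(data, k))

# Hypothetical data: two well-separated clusters of four points each
data = [((0, 0), "blue"), ((0, 1), "blue"), ((1, 0), "blue"), ((1, 1), "blue"),
        ((5, 5), "red"), ((5, 6), "red"), ((6, 5), "red"), ((6, 6), "red")]

for k in (1, 3, 7):
    print(k, loo_accuracy(data, k))  # 1.0, 1.0, then 0.0
print("best k:", best_k(data, [1, 3, 7]))  # 1
```

With k = 7, the far cluster outvotes the near one every time. This is the "k too large" case described above.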

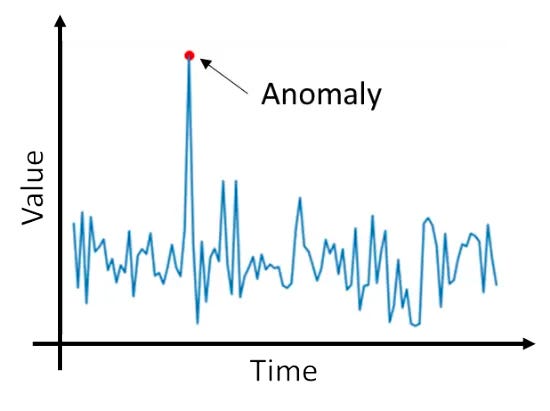

1. Anomaly Detection

As AWS defines it, anomaly detection is “examining specific data points and detecting rare occurrences that seem suspicious because they're different from the established pattern of behaviors.”

k-NN can also be used to identify anomalies. Investigating anomalies can lead to additional insights, such as discovering a predictor that was previously overlooked.

When data can be visualized, anomaly detection is simple: anomalies are the points that sit far away from the rest.
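One common way to use k-NN for anomaly detection is to score each point by its distance to its k-th nearest neighbor. Points far from everyone else get high scores. This is an assumption about the approach, not necessarily the one the source had in mind, and the points and the function name are hypothetical.

```python
import math

def knn_anomaly_scores(points, k):
    """Score each point by the distance to its k-th nearest other point."""
    scores = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        scores.append(dists[k - 1])
    return scores

# Hypothetical data: a tight cluster plus one far-away point
points = [(0, 0), (0, 1), (1, 0), (1, 1), (0.5, 0.5), (8, 8)]
scores = knn_anomaly_scores(points, k=2)
print(points[scores.index(max(scores))])  # (8, 8)
```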

2. Limitations

There are scenarios in which k-NN doesn’t perform well, such as:

Excess Predictors: with too many predictors, distances between points become less meaningful. To solve this, we want to extract only the most powerful predictors.

Imbalanced Classes: a smaller class might be overshadowed by a larger class. To solve this, we can use weighted voting instead of majority voting, so that closer neighbors are weighted more heavily than those further away.
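Here is a sketch of weighted voting. It assumes each neighbor votes with weight 1 / distance, which is one common scheme among several. The data and the function name are hypothetical. Two close red points beat three farther blue points, even though blue wins a plain majority vote.

```python
from collections import Counter, defaultdict
import math

def weighted_knn_classify(point, data, k):
    """Each of the k nearest neighbors votes with weight 1 / distance."""
    nearest = sorted(data, key=lambda item: math.dist(point, item[0]))[:k]
    weights = defaultdict(float)
    for coords, label in nearest:
        # small epsilon avoids dividing by zero when a neighbor sits exactly on the point
        weights[label] += 1.0 / (math.dist(point, coords) + 1e-9)
    return max(weights, key=weights.get)

# Hypothetical data: two close "red" points, three farther "blue" points
data = [((0.5, 0), "red"), ((0, 0.5), "red"),
        ((3, 0), "blue"), ((0, 3), "blue"), ((-3, 0), "blue")]

majority = Counter(label for _, label in data).most_common(1)[0][0]
print(majority)                                  # blue (3 votes to 2)
print(weighted_knn_classify((0, 0), data, k=5))  # red (weights roughly 4 to 1)
```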

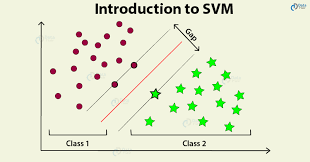

Support Vector Machine

Support vector machines (SVMs) are supervised learning models that derive classification boundaries, which can be used to separate data into two groups.

The main goal of an SVM is to derive a boundary that separates one group from the other with as wide a margin as possible.

To find the boundary, we identify the peripheral data points in each group that lie closest to points from the other group. These points are called support vectors. The boundary is then drawn down the middle between both groups' support vectors. In the figure above, the two black lines mark the support vectors.
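Below is a minimal sketch of a linear soft-margin SVM. It is trained by full-batch subgradient descent on the hinge loss, a simple training method swapped in for illustration; libraries typically use dedicated solvers instead. The hyperparameters (`lam`, `lr`, `epochs`) and the data are assumptions. After training, the points closest to the boundary play the role of support vectors.

```python
import numpy as np

def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Minimize lam/2 * ||w||^2 + mean(max(0, 1 - y * (X @ w + b))) by subgradient descent.
    Labels y must be +1 or -1."""
    n, d = X.shape
    w, b = np.zeros(d), 0.0
    for _ in range(epochs):
        margins = y * (X @ w + b)
        inside = margins < 1  # points on the wrong side of, or inside, the margin
        grad_w = lam * w - (y[inside][:, None] * X[inside]).sum(axis=0) / n
        grad_b = -y[inside].sum() / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical, linearly separable data: group +1 upper right, group -1 lower left
X = np.array([[2, 2], [3, 2], [2, 3], [3, 3],
              [-2, -2], [-3, -2], [-2, -3], [-3, -3]], dtype=float)
y = np.array([1, 1, 1, 1, -1, -1, -1, -1])

w, b = train_linear_svm(X, y)
predictions = np.sign(X @ w + b)
nearest = np.argsort(np.abs(X @ w + b))[:2]  # closest to the boundary: the support vectors
print(predictions)
print(X[nearest])
```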

1. Limitations

SVM might not work in certain conditions such as:

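Small Datasets: a small sample provides fewer data points for positioning the boundary, so the boundary may be placed poorly.

Multiple Groups: a standard SVM separates only two groups, so it doesn't work directly when there are more than two groups. To solve this, we might have to use a multi-class SVM, for example by training one SVM per group to separate it from all the others (one-vs-rest).

Large Overlap Between Groups: when the groups overlap near the boundary, points there are prone to misclassification.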

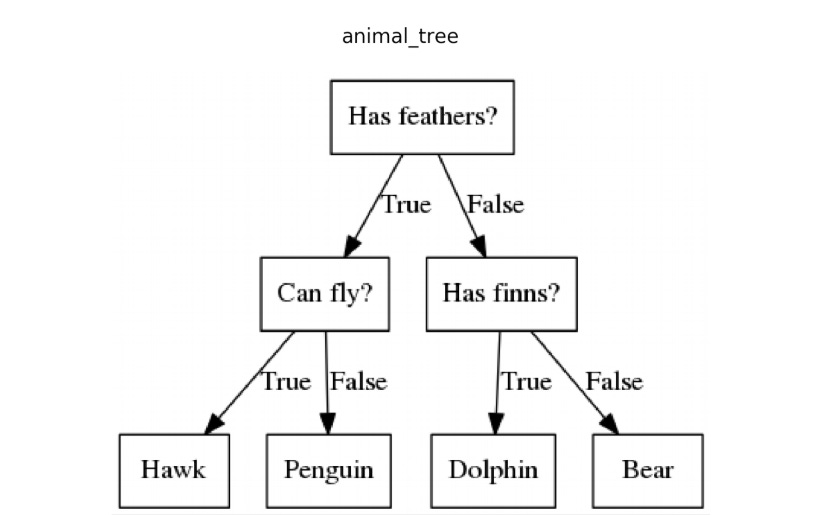

Decision Tree

A decision tree is a tree-shaped model used to make decisions and predict their possible consequences. Each question in the tree is binary, meaning it has a yes or no answer.

If we want to test more options than yes or no, we add more branches further down the tree. In a standard decision tree, though, each question still has only two responses (yes or no).

1. Generate a Decision Tree

First, split all the data points into two groups so that similar data points are grouped together, then repeat the process with each group. Each leaf node ends up with fewer data points, but those data points are more homogeneous.

This method of repeatedly splitting data to obtain homogeneous groups is called recursive partitioning. It involves:

Identifying the binary question that best splits the data points into two more homogeneous groups.

Repeating step 1 for each leaf node until a stopping criterion is reached.

Stopping criteria can include:

when a leaf contains fewer than five data points

when further branching doesn’t improve homogeneity

when all data points in a leaf have the same value
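Recursive partitioning can be sketched as follows. The sketch assumes Gini impurity as the homogeneity measure and questions of the form "is feature j <= t?". Both are common choices but assumptions here. The function names and data are hypothetical. The three stopping criteria from the list above appear in `build_tree`.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0 when every label is the same (perfectly homogeneous)."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def best_split(rows, labels):
    """Find the question 'is feature j <= t?' that most improves homogeneity, or None."""
    best, best_score = None, gini(labels)
    for j in range(len(rows[0])):
        for t in sorted({r[j] for r in rows}):
            yes = [l for r, l in zip(rows, labels) if r[j] <= t]
            no = [l for r, l in zip(rows, labels) if r[j] > t]
            if not yes or not no:
                continue
            score = (len(yes) * gini(yes) + len(no) * gini(no)) / len(labels)
            if score < best_score:
                best, best_score = (j, t), score
    return best

def build_tree(rows, labels, min_size=5):
    """Returns a leaf label, or a tuple (feature, threshold, yes_branch, no_branch)."""
    majority = Counter(labels).most_common(1)[0][0]
    if len(labels) < min_size or len(set(labels)) == 1:
        return majority  # stop: too few points, or all points have the same value
    split = best_split(rows, labels)
    if split is None:
        return majority  # stop: no question improves homogeneity
    j, t = split
    yes = [i for i, r in enumerate(rows) if r[j] <= t]
    no = [i for i, r in enumerate(rows) if r[j] > t]
    return (j, t,
            build_tree([rows[i] for i in yes], [labels[i] for i in yes], min_size),
            build_tree([rows[i] for i in no], [labels[i] for i in no], min_size))

def predict(tree, row):
    """Follow the yes/no answers down to a leaf."""
    while isinstance(tree, tuple):
        j, t, yes_branch, no_branch = tree
        tree = yes_branch if row[j] <= t else no_branch
    return tree

# Hypothetical data: (hours studied, hours slept) -> exam result
rows = [(1, 7), (2, 5), (3, 8), (4, 6), (5, 7), (6, 5), (7, 8), (8, 6), (9, 7), (10, 6)]
labels = ["fail"] * 5 + ["pass"] * 5
tree = build_tree(rows, labels)
print(tree)                  # (0, 5, 'fail', 'pass')
print(predict(tree, (9, 6))) # pass
```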

2. Limitations

Limitations of the decision tree include:

Instability: a small change in the data could change a split, which would result in a different tree.

Inaccuracy: choosing the best binary question at each step might not lead to the most accurate predictions overall. A less effective split early on might sometimes end up with better predictions.

To avoid these limitations, we can grow a diverse set of trees instead of aiming for the single best split. We then combine the trees to obtain results with better accuracy and stability.

To diversify trees, we can:

Choose different combinations of binary questions at random, and then aggregate the predictions from those trees.

Alternatively, select binary questions strategically, so that each new tree improves accuracy incrementally. The trees' predictions are then combined in a weighted sum to obtain the results. This is called gradient boosting.
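Here is a minimal gradient boosting sketch for a numeric outcome. It assumes squared-error loss, for which each new tree simply fits the residuals (the remaining errors), and uses one-question trees ("stumps"). The learning rate of 0.1, the number of trees, and the data are assumptions, and the names are hypothetical.

```python
def fit_stump(xs, residuals):
    """Pick the yes/no question 'x <= t?' whose two group means best fit the residuals."""
    best = None
    for t in sorted(set(xs))[:-1]:  # skip the largest value so both groups are non-empty
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, t, left_mean, right_mean)
    _, t, left_mean, right_mean = best
    return lambda x: left_mean if x <= t else right_mean

def gradient_boost(xs, ys, n_trees=50, learning_rate=0.1):
    """Each new stump is fitted to the errors the current ensemble still makes."""
    base = sum(ys) / len(ys)
    stumps = []

    def predict(x):
        # weighted sum: starting guess plus a scaled-down contribution from every stump
        return base + learning_rate * sum(stump(x) for stump in stumps)

    for _ in range(n_trees):
        residuals = [y - predict(x) for x, y in zip(xs, ys)]
        stumps.append(fit_stump(xs, residuals))
    return predict

# Hypothetical data: the outcome jumps from 0 to 10 between x = 4 and x = 5
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [0, 0, 0, 0, 10, 10, 10, 10]

one_tree = gradient_boost(xs, ys, n_trees=1)
many_trees = gradient_boost(xs, ys, n_trees=50)
print(one_tree(2), many_trees(2))  # the second is much closer to the true value 0
```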

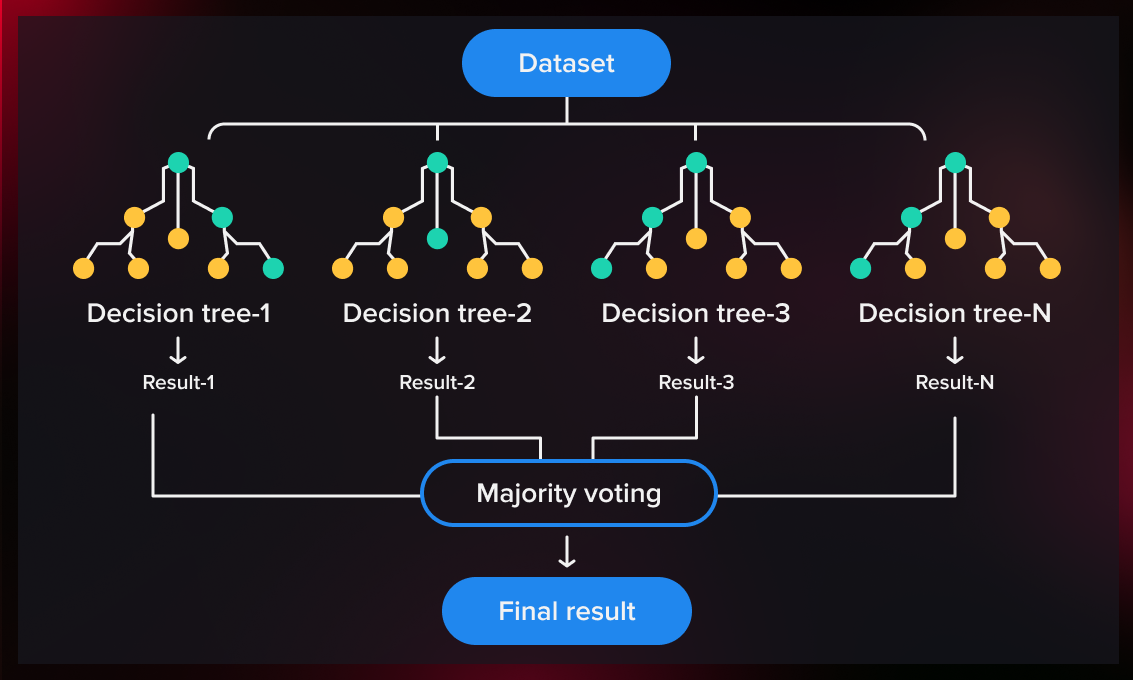

Random Forests

A random forest combines multiple decision trees with different strengths and weaknesses, which together can yield accurate predictions. This method of combining models to improve accuracy is called ensembling.

1. Ensembles

A random forest is essentially an ensemble of decision trees. By combining trees together, correct predictions reinforce each other, while errors tend to cancel each other out.

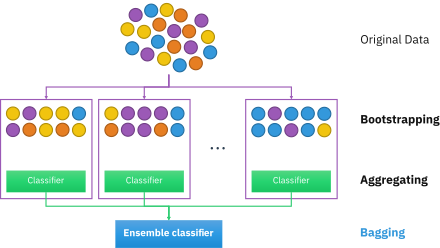

2. Bootstrap Aggregating

Finding the right combination of variables can be difficult, so we use bootstrap aggregating (bagging) to create thousands of trees that are different from each other. Each tree is generated from a random sample of the training data, drawn with replacement. This allows the trees to be different while each still retains some predictive power.
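The sketch below shows bootstrap aggregating with one-question trees and a majority vote. A full random forest would also pick a random subset of predictors at each split, which this one-predictor sketch omits. The data, the number of trees (25 rather than thousands), the seed, and all names are hypothetical.

```python
import random
from collections import Counter

def fit_stump(xs, labels):
    """Best single question 'is x <= t?' for 1-D data, judged by misclassification count."""
    best = None
    for t in sorted(set(xs)):
        left = [l for x, l in zip(xs, labels) if x <= t]
        right = [l for x, l in zip(xs, labels) if x > t]
        left_label = Counter(left).most_common(1)[0][0]
        right_label = Counter(right).most_common(1)[0][0] if right else left_label
        errors = sum(l != left_label for l in left) + sum(l != right_label for l in right)
        if best is None or errors < best[0]:
            best = (errors, t, left_label, right_label)
    _, t, left_label, right_label = best
    return lambda x: left_label if x <= t else right_label

def bagging(xs, labels, n_trees=25, seed=0):
    """Train each tree on a bootstrap sample, then predict by majority vote."""
    rng = random.Random(seed)
    n = len(xs)
    trees = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # n draws with replacement
        trees.append(fit_stump([xs[i] for i in idx], [labels[i] for i in idx]))

    def predict(x):
        return Counter(tree(x) for tree in trees).most_common(1)[0][0]
    return predict

# Hypothetical data: small values are "low", large values are "high"
xs = [1, 2, 3, 4, 5, 11, 12, 13, 14, 15]
labels = ["low"] * 5 + ["high"] * 5
forest = bagging(xs, labels)
print(forest(0), forest(20))  # low high
```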

3. Limitations

Because a forest combines many trees, it’s difficult to know how the model reached its result. This lack of interpretability could, in turn, lead to ethical issues.

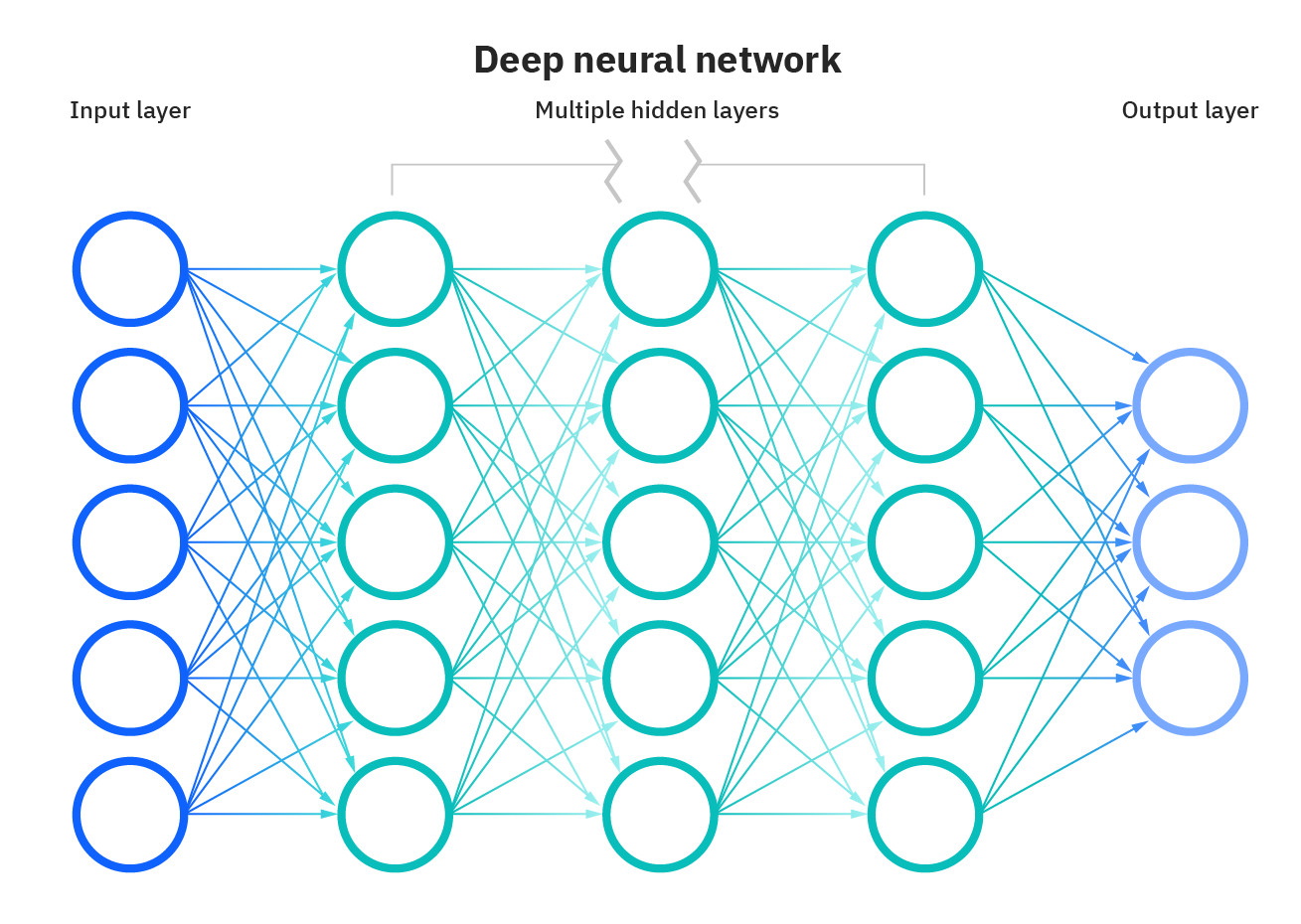

Neural Networks

Neural networks are loosely modeled on the neurons in your brain: many artificial neurons work together to convert input signals into corresponding output labels.

Neural networks have become popular because of:

Advances in Data Storage and Sharing

Better Algorithms

Increased Computing Power

1. Components of a Neural Network

A neural network uses multiple layers of neurons to process the input and make predictions.

Its components are:

Input Layer: processes the incoming data

Hidden Layers: further process the data passed on from the input layer

Output Layer: produces the final prediction

Loss Layer: gives feedback on whether the input has been identified correctly (not shown above)

The loss layer is vital in training neural networks. If a prediction is correct, feedback from the loss layer reinforces the pathway that led to it. If an error is made, the neurons along the path re-calibrate to reduce the error. For the neurons to make the correct adjustment, the error must be fed back through the network. This process is called backpropagation.
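Here is a small NumPy sketch of the components and of backpropagation. It is an assumed toy setup: one hidden layer of 8 tanh neurons, a sigmoid output, binary cross-entropy as the loss, plain gradient descent, and the XOR problem as data. None of these choices come from the article. Each training step runs forward through the layers, measures the error, and pushes it backward to adjust every weight.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_xor(hidden=8, lr=0.5, epochs=5000, seed=0):
    rng = np.random.default_rng(seed)
    X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)  # input layer
    y = np.array([[0], [1], [1], [0]], dtype=float)              # XOR targets
    W1, b1 = rng.normal(size=(2, hidden)), np.zeros(hidden)
    W2, b2 = rng.normal(size=(hidden, 1)), np.zeros(1)
    losses = []
    for _ in range(epochs):
        # forward pass: input -> hidden layer -> output layer
        h = np.tanh(X @ W1 + b1)
        p = sigmoid(h @ W2 + b2)
        # loss layer: binary cross-entropy (clipped to avoid log(0))
        p_safe = np.clip(p, 1e-12, 1 - 1e-12)
        losses.append(-np.mean(y * np.log(p_safe) + (1 - y) * np.log(1 - p_safe)))
        # backpropagation: feed the error back through each layer
        dz2 = (p - y) / len(X)           # gradient at the output pre-activation
        dW2, db2 = h.T @ dz2, dz2.sum(axis=0)
        dz1 = (dz2 @ W2.T) * (1 - h ** 2)  # chain rule through tanh
        dW1, db1 = X.T @ dz1, dz1.sum(axis=0)
        # re-calibrate every weight to reduce the error
        W2 -= lr * dW2
        b2 -= lr * db2
        W1 -= lr * dW1
        b1 -= lr * db1
    return (W1, b1, W2, b2), losses

def predict(params, X):
    W1, b1, W2, b2 = params
    return (sigmoid(np.tanh(X @ W1 + b1) @ W2 + b2) > 0.5).astype(int).ravel()

params, losses = train_xor()
print(losses[0], losses[-1])  # the loss should fall as training proceeds
print(predict(params, np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)))
```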

2. Limitations

Neural networks do have limitations such as:

Large Sample Size Required: neural networks need a large amount of data to train on. If the training set is too small, overfitting might happen. To fix this, we can use subsampling, distortions, and dropout.

Expensive: training can be quite expensive without the best computing hardware. We could tweak our algorithms to run faster, with the tradeoff being lower prediction accuracy. We can do so by using fully connected layers, mini-batch gradient descent, or stochastic gradient descent.

Not Interpretable: it is difficult to pinpoint which combination of inputs produces a correct prediction. This makes the model difficult to explain, which is why neural networks are referred to as black boxes.
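Mini-batch gradient descent saves computation because each update looks at only a small batch of examples instead of the whole training set. The sketch below applies it to a one-predictor linear model rather than a full neural network to keep it short. The batch size, learning rate, epoch count, and data are assumptions.

```python
import numpy as np

def minibatch_gd(x, y, batch_size=16, lr=0.1, epochs=50, seed=0):
    """Fit y ~ w * x + b, updating after every small batch instead of every full pass."""
    rng = np.random.default_rng(seed)
    w, b = 0.0, 0.0
    n = len(x)
    for _ in range(epochs):
        order = rng.permutation(n)  # shuffle so each epoch sees different batches
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            err = (w * x[idx] + b) - y[idx]
            # gradient of the mean squared error on this batch only
            w -= lr * 2 * np.mean(err * x[idx])
            b -= lr * 2 * np.mean(err)
    return w, b

# Hypothetical data generated from y = 3x + 2 plus a little noise
rng = np.random.default_rng(1)
x = rng.normal(size=256)
y = 3 * x + 2 + rng.normal(scale=0.1, size=256)

w, b = minibatch_gd(x, y)
print(w, b)  # should land near 3 and 2
```

Setting `batch_size=1` turns this into stochastic gradient descent.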

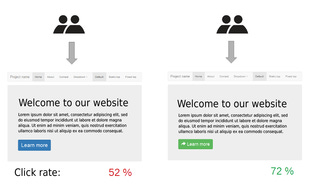

A/B Testing

A/B testing involves creating two or more versions of something (such as an ad), showing those versions to different groups of visitors, and determining which one has the biggest impact.

1. Limitations

There are two problems with A/B testing:

Results Can Be a Fluke: by chance, a bad ad can perform better than a good ad. You can fix this by showing the versions to more people, but this would be costly.

Loss of Revenue: by showing the versions to more people, we would also end up displaying the bad ad to more people. That means losing buyers who might have been persuaded by the better ad.

In short, every visitor shown the bad ad is a potential customer at risk: the same visitors who saw the bad ad might have made a purchase had they seen the better ad.
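One standard way to judge whether a difference is a fluke is a two-proportion z-test. This significance test is not described in the article and is swapped in here for illustration. The conversion counts and the 0.05 threshold are assumptions. The same 10% versus 16% gap is easily explained by chance with 50 visitors per ad, but not with 5,000.

```python
import math

def two_proportion_z_test(conversions_a, visitors_a, conversions_b, visitors_b):
    """Two-sided z-test for a difference between two conversion rates."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / se
    # two-sided p-value from the standard normal CDF, written with math.erf
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value

# Hypothetical results: ad A converts 10% of visitors, ad B converts 16%
small = two_proportion_z_test(5, 50, 8, 50)
large = two_proportion_z_test(500, 5000, 800, 5000)
print(small)  # p-value well above 0.05: the gap could easily be a fluke
print(large)  # p-value far below 0.05: the gap is unlikely to be chance
```

The larger test is more trustworthy, but it is also the one that shows the weaker ad to thousands of extra visitors.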

[END]