Data Science for Beginners (Part One)

An introduction to Data Science. This part covers the basics of data, selecting variables and algorithms, evaluating results, and more. Note that it does not go into the math behind data science.

Basics

A data science study contains four key steps. First, data must be prepared and processed for analysis. Second, algorithms are shortlisted based on the study’s requirements. Third, the algorithms’ parameters are tuned to optimize results. Last, the resulting models are evaluated so that we can select the best one.

1. Preparation

Data science is about data. To generate great results, we need high-quality data. If the data quality is poor, the results will be poor, no matter how skilled you are at analyzing data.

Format

This format is the most common way to represent data for analysis. It is called tabular form; in this case, it is an Excel spreadsheet. Each column is a variable that describes the data points, and each row is a single data point, also called an observation. In this table, the variables are “Country”, “Salesperson”, “Order Date”, etc. The data points are the rows, such as rows 2-18.

We could change what each row represents, but that depends on your current objective.

Types

There are four variable types:

Categorical: When there are two or more options (categories). The two main types are nominal (categories with no natural order, such as country) and ordinal (categories with an order, such as small, medium, and large).

Binary: When there are only two options, such as true or false, or 0 and 1.

Integer: When the information is represented by a whole number.

Continuous: When the information can include decimal places. This is the most detailed type of variable.
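To make the four types concrete, here is a rough Python sketch that guesses a column’s type from its values. The function name `infer_variable_type` and its rules (exactly two distinct values means binary, whole numbers mean integer, and so on) are illustrative assumptions, not a standard. Real data usually needs a human judgment call, since a two-option category, for example, is also binary.

```python
def infer_variable_type(values):
    """Guess a column's variable type using simple, illustrative rules (an assumption)."""
    distinct = set(values)
    if len(distinct) == 2:
        return "binary"  # exactly two options, e.g. True/False or 0/1
    is_number = all(
        isinstance(v, (int, float)) and not isinstance(v, bool) for v in values
    )
    if is_number:
        if all(float(v).is_integer() for v in values):
            return "integer"  # whole numbers only
        return "continuous"  # at least one value has decimal places
    return "categorical"  # anything else, e.g. text labels

print(infer_variable_type(["Canada", "Japan", "Canada", "Peru"]))  # → categorical
print(infer_variable_type([True, False, True]))  # → binary
print(infer_variable_type([3, 7, 12]))  # → integer
print(infer_variable_type([1.5, 2.25, 3.0]))  # → continuous
```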

Selection

Having too many variables can slow down computation or lead to wrong results. This is why we should identify the most important variables first. This is often a trial-and-error process, since we have to experiment with many variables to determine which ones suit us best.
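One simple way to shortlist variables, among many, is to rank them by how strongly each one correlates with the outcome. The sketch below assumes numeric variables and uses Pearson correlation as the ranking score; the helper names and the sales data are hypothetical. Correlation only captures linear relationships, so treat the ranking as a starting point for the trial-and-error process rather than a final answer.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant variable cannot explain the outcome
    return cov / math.sqrt(var_x * var_y)

def rank_variables(columns, target):
    """Sort variable names from strongest to weakest absolute correlation with target."""
    scores = {name: abs(pearson(values, target)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical example data: which variables track sales?
columns = {
    "ad_spend": [1, 2, 3, 4, 5],
    "store_id": [3, 1, 4, 1, 5],
    "constant": [7, 7, 7, 7, 7],
}
sales = [2.1, 3.9, 6.2, 7.8, 10.1]
print(rank_variables(columns, sales))  # → ['ad_spend', 'store_id', 'constant']
```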

Missing Data

A perfect data set with no missing data is rare; usually some values will be missing. Missing data can be handled in the following ways:

Computed: Missing values can be predicted using supervised learning algorithms.

Approximated: If the variable with the missing value is categorical or binary, the missing value can be replaced with the mode (most common value) of that variable. For continuous or integer variables, we can use the median.

Removed: As a last resort, we can remove the rows with missing values. You typically want to do this last, as it reduces the amount of data available. It can also skew the data toward or away from a particular group.
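The approximation and removal strategies can be sketched in plain Python. This assumes missing values are stored as `None` and that the caller already knows each variable’s type; `impute` and `drop_incomplete_rows` are hypothetical helpers, not a library API.

```python
import statistics

def impute(column, kind):
    """Replace None with the mode (categorical/binary) or the median (integer/continuous)."""
    present = [v for v in column if v is not None]
    if kind in ("categorical", "binary"):
        fill = statistics.mode(present)  # most common value
    else:
        fill = statistics.median(present)  # middle value, robust to outliers
    return [fill if v is None else v for v in column]

def drop_incomplete_rows(rows):
    """Last resort: keep only rows (dicts) that have no missing values."""
    return [row for row in rows if None not in row.values()]

print(impute(["red", None, "blue", "red"], "categorical"))  # → ['red', 'red', 'blue', 'red']
print(impute([4, None, 10, 6], "integer"))  # → [4, 6, 10, 6]
print(drop_incomplete_rows([{"age": 31, "city": None}, {"age": 45, "city": "Oslo"}]))
# → [{'age': 45, 'city': 'Oslo'}]
```

With an even number of present values, the median can fall midway between two values, so an integer column may need rounding afterward.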

2. Selection

We can use different algorithms to analyze our data. The choice of algorithms depends on the task that you want to perform.

Unsupervised Learning

Unsupervised learning is used to discover what patterns exist in the data. If you want to find hidden patterns in a dataset, use unsupervised learning. It is called unsupervised because we don’t know in advance what patterns to look for.

Supervised Learning

Supervised learning uses patterns in the data to make predictions. It is called supervised because the model learns from pre-existing patterns: examples where the correct outcomes are already known.

If we were to predict continuous or integer values, we would be solving a regression problem. If we were to predict categorical or binary values, we would be solving a classification problem.

Reinforcement Learning

Reinforcement learning also uses patterns to make predictions, but its predictions improve as more results come in. Reinforcement learning is about continually improving through feedback from results, unlike unsupervised and supervised learning, where a model is typically trained once and then deployed without further change.

3. Tuning

Parameters are used to tweak an algorithm’s settings. Different algorithms have different parameters. If a model’s parameters are poorly tuned, the model’s accuracy can suffer in one of two ways:

Overfitting can happen if the algorithm is too sensitive and mistakes random variations for patterns.

Underfitting can happen if the algorithm is not sensitive enough and overlooks underlying patterns.

One way to counter overfitting is regularization. It introduces a penalty parameter that penalizes increases in a model’s complexity by artificially inflating its prediction error. This helps the algorithm balance accuracy against complexity.
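A minimal sketch of regularization, assuming a one-variable model y = w * x with no intercept and a squared-weight (L2, or “ridge”) penalty; other penalties exist. `lam` is a hypothetical name for the penalty parameter. Raising it shrinks the fitted weight toward zero, trading some accuracy on the training data for a simpler model.

```python
def penalized_error(w, xs, ys, lam):
    """Squared prediction error plus an L2 penalty that grows with the weight's size."""
    sse = sum((y - w * x) ** 2 for x, y in zip(xs, ys))
    return sse + lam * w ** 2

def fit_ridge_1d(xs, ys, lam):
    """Weight that minimizes penalized_error for the model y = w * x (closed form)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)

xs, ys = [1, 2, 3], [2, 4, 6]
for lam in (0, 14):
    w = fit_ridge_1d(xs, ys, lam)
    print(lam, w, penalized_error(w, xs, ys, lam))
# prints "0 2.0 0.0", then "14 1.0 28.0": the penalty halves the weight
```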

4. Results

When we have finished building a model, we must evaluate it using evaluation metrics. Here are some commonly used ones.

Classification Metrics

Confusion Matrix: Provides insight into where our model failed or succeeded.
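A confusion matrix can be built by counting each (actual, predicted) pair. In this sketch, rows are the actual classes and columns the predicted classes, so correct predictions sit on the diagonal; the labels and data are made up for illustration.

```python
from collections import Counter

def confusion_matrix(actual, predicted, labels):
    """Count (actual, predicted) pairs: rows are actual classes, columns predicted classes."""
    counts = Counter(zip(actual, predicted))
    return [[counts[(a, p)] for p in labels] for a in labels]

actual = ["spam", "spam", "ham", "ham", "ham"]
predicted = ["spam", "ham", "ham", "ham", "spam"]
for label, row in zip(["spam", "ham"], confusion_matrix(actual, predicted, ["spam", "ham"])):
    print(label, row)
# spam [1, 1]  → one spam caught, one spam missed
# ham [1, 2]   → one ham wrongly flagged, two ham correct
```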

Regression Metric

Root Mean Squared Error (RMSE): Useful when we want to avoid large errors. Because each error is squared before averaging, large errors are amplified. As a result, RMSE is sensitive to outliers.
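The effect of squaring shows up when comparing two sets of errors with the same average size: one spread evenly and one concentrated in a single outlier. The `rmse` helper below is a plain-Python sketch.

```python
import math

def rmse(actual, predicted):
    """Root mean squared error: square each error, average, then take the square root."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))

actual = [0, 0, 0, 0]
print(rmse(actual, [1, 1, 1, 1]))  # four errors of 1 → 1.0
print(rmse(actual, [0, 0, 0, 4]))  # one error of 4 → 2.0
```

Both cases have a mean absolute error of 1, but the single large error doubles the RMSE.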

Validation

Validation is used to determine how accurately a model predicts new data. Instead of waiting for new data to give us feedback, we can split our dataset into two parts. One part serves as a training dataset to build and tune the model, while the second part serves as a test dataset to assess the accuracy of its predictions.

However, if a dataset is small, this method may not work well: setting part of the data aside for testing leaves less data for training, which can reduce accuracy.

Cross-validation solves this problem. Instead of splitting the dataset into two, we split it into several segments. Each segment takes a turn as the test set while the remaining segments are used for training, so all of the data is used for both training and testing.
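A sketch of how k-fold cross-validation might divide a dataset, assuming the data points are identified by their positions 0 to n-1. `k_fold_splits` is a hypothetical helper; in practice the data is usually shuffled before splitting, which this version skips for clarity.

```python
def k_fold_splits(n, k):
    """Yield (train_indices, test_indices) for k contiguous, nearly equal folds."""
    # The first n % k folds get one extra data point so every point is used
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size

for train, test in k_fold_splits(10, 3):
    print("test:", test, "train size:", len(train))
# test: [0, 1, 2, 3] train size: 6
# test: [4, 5, 6] train size: 7
# test: [7, 8, 9] train size: 7
```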

k-means clustering

Clustering works by identifying common characteristics, which then can be used to group similar things together.

To cluster, we first need information about the items we want to group. For example, when clustering customers, we might use personality traits, income, gender, etc. Customer clusters are useful for marketing and advertising, since we want to know what the target audience is like before we target them with ads or content.

The number of clusters can vary, since big clusters can be broken down into smaller ones. We typically want to strike a balance: the number of clusters should be large enough that we can extract useful patterns to help us make decisions, but small enough that the clusters remain distinct.

A scree plot, which shows how the scatter within clusters decreases as the number of clusters increases, can help you determine the appropriate number of clusters.

We typically choose the number of clusters at the kink, the sharp bend that you see in the figure above. Beyond the kink, adding more clusters brings little further improvement. In the figure above, the kink is at 2 and 3 clusters.
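The k-means procedure and the numbers behind a scree plot can be sketched in plain Python. This assumes 2-D points and uses Lloyd’s algorithm, starting from k randomly chosen data points; the function names and sample points are hypothetical. The scree plot’s y-axis here is the total squared distance from each point to its cluster’s centroid, computed for each candidate number of clusters.

```python
import math
import random

def kmeans(points, k, iterations=100, seed=0):
    """Lloyd's algorithm: returns (centroids, labels) for a list of 2-D points."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k randomly chosen data points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of the points assigned to it
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(tuple(sum(axis) / len(members) for axis in zip(*members)))
            else:
                new_centroids.append(centroids[c])  # leave an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # assignments have stabilized
        centroids = new_centroids
    return centroids, labels

def within_cluster_ss(points, centroids, labels):
    """Total squared distance from each point to its cluster's centroid."""
    return sum(math.dist(p, centroids[label]) ** 2 for p, label in zip(points, labels))

# Hypothetical data: three well-separated groups of customers
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10), (20, 0), (20, 1), (21, 0)]
scores = {k: within_cluster_ss(points, *kmeans(points, k)) for k in range(1, 6)}
for k, score in scores.items():
    print(k, round(score, 2))
```

Plotting `scores` against the number of clusters and looking for the kink is the scree-plot step described above. Because the starting centroids are random, k-means can settle on a poor solution, so it is common to run it several times and keep the best result.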

1. Limitations

k-means clustering is useful, but there are some drawbacks such as:

Each data point can only be assigned to one cluster: a data point might lie in the middle of two clusters.

Clusters are assumed to be spherical: the actual clusters might have a different shape.

Clusters are assumed to be discrete: clusters might overlap or be nested within one another.

A good strategy to limit these drawbacks is to use k-means clustering to gain a simple understanding of the data’s structure, and then use more advanced methods to address these limitations.

Principal Component Analysis

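Principal Component Analysis (PCA) finds the underlying variables, called principal components, that best differentiate your data points.

Standardization converts variables into a standard format, similar to expressing each one in terms of percentiles. It is usually done by subtracting each variable’s mean and dividing by its standard deviation. This allows us to combine variables measured on different scales and calculate new variables.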
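A sketch of standardization as z-scores, assuming the population standard deviation; `standardize` is a hypothetical helper. After conversion, every variable has a mean of 0 and a standard deviation of 1, regardless of its original units.

```python
import statistics

def standardize(values):
    """Convert a variable to z-scores: (value - mean) / standard deviation."""
    mean = statistics.mean(values)
    sd = statistics.pstdev(values)  # population standard deviation (an assumption)
    return [(v - mean) / sd for v in values]

print(standardize([2, 4, 4, 4, 5, 5, 7, 9]))  # → [-1.5, -0.5, -0.5, -0.5, 0.0, 0.0, 1.0, 2.0]

# Hypothetical heights in centimetres end up on the same unitless scale
heights_cm = [150, 160, 170, 180, 190]
print(standardize(heights_cm))
```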

Number of components

We should select only the first few principal components for analysis and visualization, to keep things simple and generalizable. Principal components are sorted so that the first component is the most effective at differentiating data points (it captures the most variance), and each subsequent component is less effective.

Use the number of principal components that corresponds to the kink (sharp bend) in the scree plot.
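To show where the numbers on a PCA scree plot come from, this sketch computes the covariance matrix of a small dataset and extracts principal components by power iteration with deflation. That is one simple numerical method among several; libraries typically use an eigenvalue or singular-value decomposition instead. The function names and data are hypothetical, and in practice the variables would usually be standardized first. Each component’s variance divided by the total is its share of the spread.

```python
import math
import random

def covariance_matrix(rows):
    """Covariance matrix (population version) of a dataset given as equal-length rows."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    return [[sum(c[i] * c[j] for c in centered) / n for j in range(d)] for i in range(d)]

def principal_components(cov, iterations=1000, seed=0):
    """(variance, direction) pairs via power iteration with deflation, largest first."""
    rng = random.Random(seed)
    d = len(cov)
    matrix = [row[:] for row in cov]
    components = []
    for _ in range(d):
        # Random unit starting vector, almost surely not orthogonal to the target direction
        vec = [rng.gauss(0, 1) for _ in range(d)]
        norm = math.sqrt(sum(x * x for x in vec))
        vec = [x / norm for x in vec]
        for _ in range(iterations):
            nxt = [sum(matrix[i][j] * vec[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in nxt))
            if norm == 0:
                break  # no variance left in the remaining directions
            vec = [x / norm for x in nxt]
        # Variance captured along this direction (Rayleigh quotient of a unit vector)
        variance = sum(vec[i] * matrix[i][j] * vec[j] for i in range(d) for j in range(d))
        components.append((variance, vec))
        # Deflation: remove this component so the next pass finds the next-largest one
        matrix = [[matrix[i][j] - variance * vec[i] * vec[j] for j in range(d)] for i in range(d)]
    return sorted(components, key=lambda pair: pair[0], reverse=True)

# Hypothetical data: two strongly related variables
rows = [(1, 2), (2, 4.1), (3, 5.9), (4, 8.2), (5, 9.8)]
components = principal_components(covariance_matrix(rows))
total = sum(variance for variance, _ in components)
print([round(variance / total, 3) for variance, _ in components])  # share of spread per component
```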

1. Limitations

Like k-means clustering, principal component analysis also has limitations:

Maximizing Spread: dimensions with the largest spread of data points aren’t always the most useful.

Interpreting Components: it can be hard to interpret why variables are combined in a certain way.

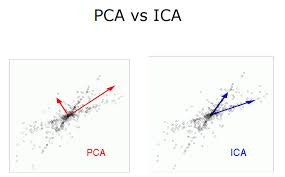

Orthogonal Components: PCA generates components that are perpendicular (at 90 degrees) to each other. This is a problem if the most informative dimensions are not perpendicular. To counter this, we can use Independent Component Analysis (ICA).

ICA allows components to be non-orthogonal, but the components cannot overlap in the information they contain. As a result, each component reveals unique information about the dataset.

PCA remains a more popular technique for dimension reduction than ICA, but ICA can be used to verify results from PCA.

Association Rules

Association rules uncover how variables are associated with each other.

1. Confidence, Lift, and Support

Three common measures to identify associations:

Confidence: indicates how frequently variable Y appears when variable X is present. One disadvantage of confidence is that it may misrepresent the importance of an association: if Y is very common on its own, confidence will be high even when X and Y are unrelated.

Lift: indicates how frequently variables X and Y appear together, while accounting for how frequently each appears on its own. A lift greater than 1 suggests that X and Y are associated.

Support: indicates how frequently a variable set appears, measured by the proportion of data points (e.g., transactions) in which the variable set is present.
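The three measures can be computed directly from a list of transactions. This sketch assumes each transaction is a Python `set` of item names and that X and Y are also passed as sets; the function names and the grocery data are made up for illustration.

```python
def support(itemset, transactions):
    """Proportion of transactions that contain every item in itemset."""
    return sum(itemset <= t for t in transactions) / len(transactions)

def confidence(x, y, transactions):
    """How often y appears when x is present: support(x and y) / support(x)."""
    return support(x | y, transactions) / support(x, transactions)

def lift(x, y, transactions):
    """Confidence of x -> y relative to how common y is on its own; above 1 suggests association."""
    return confidence(x, y, transactions) / support(y, transactions)

# Hypothetical shopping baskets, each a set of items
transactions = [{"apple", "beer"}, {"apple", "beer"}, {"apple"}, {"beer"}, {"milk"}]
apple, beer = {"apple"}, {"beer"}
print(support(apple | beer, transactions))  # 2 of 5 baskets → 0.4
print(confidence(apple, beer, transactions))  # 2 of the 3 apple baskets
print(lift(apple, beer, transactions))
```

Here lift is slightly above 1, so apple and beer appear together a little more often than their individual frequencies alone would suggest.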

2. Limitations

Limitations of association rules:

Spurious Associations: when there are a large number of variables, some associations can occur by chance, so associations should be validated to ensure they generalize.

Computationally Expensive: the apriori principle (if a variable set is infrequent, any larger set containing it must also be infrequent) helps reduce the number of variable sets to examine, but that number can still be large if the dataset is large. A solution is to reduce the number of comparisons using advanced data structures.

Social Network Analysis

Social Network Analysis (SNA) identifies social structures in order to find relationships, such as how people are related to each other.

The figure above is a graph of 12 individuals, but we’re only going to focus on Chris, Lynda, Martha, and David. Each person is represented by a node. Nodes are connected by lines, known as edges. Each edge has a weight, which indicates the strength of the relationship.

From the figure we can tell:

Martha has a strong relationship with Chris and Lynda, since the edges connecting them are more heavily weighted.

David has a strong relationship with Chris.

Chris has a strong relationship with everyone.

Lynda has a strong relationship with Chris and Martha.

NOTE: If two nodes are not connected by an edge, they have no relationship.

SNA can also be used to map networks for other entities.

1. Louvain Method

Grouping closely connected nodes reveals clusters within a network. Clusters help us identify how different parts of the network differ from each other.

The Louvain method is used to identify clusters in a network. Louvain method will iterate through the following steps:

Step 1: Treat each node as a single cluster, so there are as many clusters as there are nodes.

Step 2: Reassign each node to the cluster that results in the highest improvement in modularity (a measure of how densely connected nodes are within clusters compared with connections between clusters). If modularity cannot be improved any further, the node stays put. Repeat for every node until there are no more reassignments.

Step 3: Build a version of the network in which each cluster found in Step 2 is represented as a single node, and consolidate former inter-cluster edges into weighted edges.

Step 4: Keep repeating Steps 2 and 3 until no further reassignments or consolidations are possible.
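The reassignment step relies on modularity. A full Louvain implementation is longer than fits here, but the score it tries to improve can be sketched for a weighted, undirected graph using the standard per-cluster form of modularity. The `modularity` function and the two-triangle network are illustrative assumptions.

```python
from collections import defaultdict

def modularity(edges, clusters):
    """Modularity Q of a partition of a weighted, undirected graph.

    edges: list of (node_a, node_b, weight); clusters: dict mapping node -> cluster id.
    Q = sum over clusters c of (L_c / m) - (d_c / (2 * m)) ** 2, where m is the total edge
    weight, L_c the weight of edges inside c, and d_c the total weighted degree of c's nodes.
    """
    m = sum(weight for _, _, weight in edges)
    inside = defaultdict(float)  # L_c
    degree = defaultdict(float)  # d_c
    for a, b, weight in edges:
        degree[clusters[a]] += weight
        degree[clusters[b]] += weight
        if clusters[a] == clusters[b]:
            inside[clusters[a]] += weight
    return sum(inside[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)

# Hypothetical network: two triangles with no edges between them
edges = [("a", "b", 1), ("b", "c", 1), ("a", "c", 1), ("d", "e", 1), ("e", "f", 1), ("d", "f", 1)]
two_clusters = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
one_cluster = {node: 0 for node in "abcdef"}
print(modularity(edges, two_clusters))  # → 0.5
print(modularity(edges, one_cluster))  # → 0.0
```

Splitting the two triangles into separate clusters scores 0.5, while lumping every node into one cluster scores 0; Louvain’s reassignment step keeps the moves that raise this number.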

There are limitations to the Louvain method such as:

It can be difficult to identify the optimal clustering solution if the network has overlapping or nested clusters.

Small clusters may be overlooked.

2. PageRank

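PageRank is named after Google co-founder Larry Page. The PageRank algorithm was originally used to rank websites, but it can rank nodes of any kind, so we can use it to identify dominant nodes in a network.

A website’s PageRank is determined by:

Number of links: the more websites that link to it, the higher it ranks.

Strength of links: the more frequently those links are accessed, the higher it ranks.

Source of links: links from other high-ranking websites boost its rank.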
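A minimal power-iteration sketch of PageRank, assuming links are given as a dictionary from each page to the pages it links to. It captures the number and source of links, but ignores their strength: every outgoing link is weighted equally. The damping factor of 0.85 is the commonly cited default, and pages with no outgoing links are assumed to spread their rank evenly over all pages; the page names are hypothetical.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Power-iteration PageRank over a dict mapping each page to the pages it links to."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start with equal rank everywhere
    for _ in range(iterations):
        new_rank = {p: (1.0 - damping) / n for p in pages}  # baseline share for every page
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its rank, split evenly, to the pages it links to
                share = damping * rank[page] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # Dangling page: spread its rank evenly over all pages
                for t in pages:
                    new_rank[t] += damping * rank[page] / n
        rank = new_rank
    return rank

links = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda kv: kv[1], reverse=True):
    print(page, round(score, 3))
```

Page C, which every other page links to, ends up with the highest rank, while D, which nothing links to, keeps only the baseline share.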

Regression Analysis

Trend lines are commonly used for predictions because they are easy to understand and generate. You have probably seen trend charts of stock prices or temperature forecasts.

A common trend line uses one predictor to predict an outcome, such as using time (predictor) to predict a stock price (outcome). We could improve predictions by adding more predictors.

Regression analysis will allow us to compare the strength of each predictor and improve predictions.
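For a single predictor, the best-fitting trend line can be computed in closed form with ordinary least squares. This is a sketch with a hypothetical function name; with several predictors, the same least-squares idea produces one weight per predictor, and comparing those weights (on standardized predictors) is one way to compare their strength.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    # Slope = covariance of x and y divided by variance of x
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x  # the line passes through the means

slope, intercept = fit_line([0, 1, 2, 3], [1, 3, 5, 7])
print(slope, intercept)  # → 2.0 1.0
print(slope * 4 + intercept)  # prediction for x = 4 → 9.0
```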

1. Gradient Descent

Gradient descent is used when the optimal parameters cannot be derived directly. It starts with a guess at a set of suitable weights, then begins an iterative process: the weights are applied to every data point to make predictions, and then tweaked to reduce the overall prediction error.

In the figure above, the lowest point is where prediction error is minimized.

As the figure above shows, the error curve can contain pits (local minima). The gradient descent algorithm might mistake a pit for the lowest point.

To reduce the risk of getting stuck in a pit, we can use stochastic gradient descent. Instead of using every data point to adjust the parameters in each iteration, stochastic gradient descent uses only one data point. This introduces variability, which can help the algorithm escape from a pit.
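Both variants can be sketched for a one-predictor line y = w * x + b with mean squared error as the prediction error. The learning rates, epoch counts, data, and function names are illustrative assumptions. This error surface is bowl-shaped with no pits, so the example shows only the mechanics: batch gradient descent uses every data point per update, while stochastic gradient descent uses one randomly chosen point.

```python
import random

def gradient_descent(xs, ys, lr=0.05, epochs=2000):
    """Batch gradient descent: every data point contributes to each weight update."""
    w, b = 0.0, 0.0  # initial guess
    n = len(xs)
    for _ in range(epochs):
        # Gradients of mean squared error with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def stochastic_gradient_descent(xs, ys, lr=0.01, epochs=2000, seed=0):
    """SGD: each update uses a single randomly chosen data point."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(epochs * len(xs)):
        i = rng.randrange(len(xs))
        error = w * xs[i] + b - ys[i]
        w -= lr * 2 * error * xs[i]
        b -= lr * 2 * error
    return w, b

xs, ys = [0, 1, 2, 3], [1, 3, 5, 7]  # points on the line y = 2x + 1
print(gradient_descent(xs, ys))
print(stochastic_gradient_descent(xs, ys))
```

Both should end up close to w = 2 and b = 1; on noisy real data, SGD’s path jumps around more, which is the variability that can help it escape pits.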

[End of Part One]