What is Bayesian Statistics? The Beginner Math Guide (Part Four Final)

When more data is bad 😱😱😨

Bayes Factor

Let’s revisit Bayes’ theorem, P(A | B) = P(B | A) × P(A) / P(B), where:

P(A | B) is the posterior probability which tells us how strongly we believe in our hypothesis

P(A) is the prior belief, or the probability of our hypothesis prior to looking at the data

P(B | A) is the likelihood of getting the existing data if our hypothesis is true

P(B) is the probability of data observed independent of the hypothesis.

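P(B) is often hard to define, and sometimes it’s hard to work out the probability of our data at all. Comparing two hypotheses sidesteps the problem, because P(B) cancels when we take the ratio of their posteriors.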

Bayes Factor

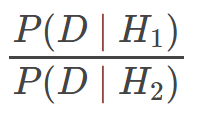

This is the Bayes factor: P(D | H_1) / P(D | H_2)

It is the ratio between the likelihoods of two hypotheses. It tells us how likely the data we have seen are under what we believe to be true, compared with what someone else believes to be true. Our hypothesis wins if it explains the world better than the competing hypothesis.

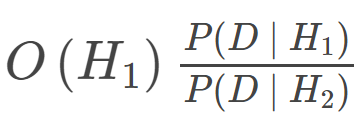

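We also need to combine the Bayes factor with the prior odds (written as O(H_1)): posterior odds = O(H_1) × P(D | H_1) / P(D | H_2)

This tells us how many times more strongly we should believe in our hypothesis than in the competing one, once both the data and our prior beliefs are taken into account.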
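To see why this comparison never needs the probability of the data, one can write Bayes’ theorem once for each hypothesis and divide. The short derivation below is a sketch using D for the data and H_1, H_2 for the two hypotheses; the braces are only labels added for illustration:

```latex
\frac{P(H_1 \mid D)}{P(H_2 \mid D)}
  = \frac{P(D \mid H_1)\,P(H_1) \,/\, P(D)}{P(D \mid H_2)\,P(H_2) \,/\, P(D)}
  = \underbrace{\frac{P(D \mid H_1)}{P(D \mid H_2)}}_{\text{Bayes factor}}
    \times
    \underbrace{\frac{P(H_1)}{P(H_2)}}_{\text{prior odds } O(H_1)}
```

P(D) appears in both the numerator and the denominator, so it cancels and only the likelihoods and the priors remain.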

Example

Suppose that you are out walking and suddenly feel a pain in your chest and a pain in your neck. You google potential causes of your symptoms and arrive at two possible hypotheses:

Heartburn: When stomach acid backs up into your esophagus. You can avoid lying down and use medications to ease the symptoms.

Heart Attack: “A blockage of blood flow to the heart muscle.” Most likely requires a trip to the hospital.

Of the two, heart attack is the more worrying. Yeah, it could be just heartburn and nothing else, but what if it’s not? Because you are most worried about the possibility of having a heart attack, you decide to make this your H_1. Your H_2 is the hypothesis that you have heartburn.

We’ll start by looking at the likelihood of these hypotheses and compute P(D | H). You have observed two symptoms: pain in the chest and pain in the neck.

The following numbers are made up.

For heart attacks, the probability of experiencing chest pain is 97 percent, and the probability of experiencing neck pain is 76 percent. Treating the two symptoms as independent, the probability of having both is: P(D | H_1) = 0.97 × 0.76 = 0.7372

We’ll do the same for H_2. For heartburn, the probability of experiencing chest pain is 89 percent, and the probability of neck pain is 41 percent, which means the probability of having both chest pain and neck pain is: P(D | H_2) = 0.89 × 0.41 = 0.3649

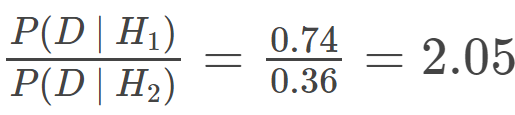

We can now plug these into our Bayes factor: P(D | H_1) / P(D | H_2) = 0.7372 / 0.3649 ≈ 2.02

The Bayes factor alone doesn’t help us much, so next we need to determine the prior odds of each hypothesis.

That means finding out how much more likely a person is to have one condition than the other.

By doing some research on heart attacks, we have found that there are 805,000 cases among 331,900,000 people. The prior probability looks like this: P(H_1) = 805,000 / 331,900,000 = 161 / 66,380

For heartburn, we have found that there are 60,000,000 cases among 331,900,000 people. So it looks like this: P(H_2) = 60,000,000 / 331,900,000 = 12,000 / 66,380

To get the prior odds for H_1, we need to look at the ratio of these two prior probabilities: O(H_1) = P(H_1) / P(H_2) = 161 / 12,000

Based on this, a person is about 74 times more likely to have heartburn than a heart attack (12000 / 161). To get the full posterior odds, we multiply our Bayes factor by our prior odds: posterior odds = (0.7372 / 0.3649) × (161 / 12,000) ≈ 0.0271

These results show that H_2 is about 37 times more likely than H_1 (1 / 0.0271). So you can relax a little: heartburn is by far the more likely explanation.
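The arithmetic in this example is easy to check in a few lines of Python. This is only a sketch using the made-up numbers above; the variable names are illustrative, and it assumes the two symptoms are independent given each condition, which is what multiplying their probabilities implies.

```python
# Made-up symptom probabilities from the example.
# Assumption: chest pain and neck pain are independent given each condition,
# so the probability of seeing both is the product of the two.
p_data_given_heart_attack = 0.97 * 0.76  # P(D | H_1)
p_data_given_heartburn = 0.89 * 0.41     # P(D | H_2)

# Bayes factor: how much better H_1 explains the symptoms than H_2.
bayes_factor = p_data_given_heart_attack / p_data_given_heartburn

# Prior odds O(H_1): heart attack cases relative to heartburn cases.
# The shared population of 331,900,000 cancels in the ratio.
prior_odds = 805_000 / 60_000_000

# Posterior odds of H_1 against H_2.
posterior_odds = bayes_factor * prior_odds

print(f"Bayes factor:   {bayes_factor:.2f}")
print(f"Prior odds:     {prior_odds:.4f}")
print(f"Posterior odds: {posterior_odds:.4f}")
print(f"H_2 is about {1 / posterior_odds:.1f} times more likely than H_1")
```

Run without rounding any intermediate value, the sketch gives a Bayes factor of roughly 2.02 and a final ratio close to 37. A small difference from a hand-worked figure may come down to how the intermediate values were rounded.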

Data doesn’t convince

There are times when data doesn’t convince people in the way we expect it to.

Dice

Suppose that your friend claims he can predict the outcome of a six-sided die roll with 80 percent accuracy. You set up a hypothesis test to find out whether the claim holds up:

The first hypothesis, H_1, represents your belief that the die is fair and that your friend can’t predict the outcome, so each guess is right with probability 1/6.

The second hypothesis, H_2, represents your friend’s belief that he can predict 80% of all outcomes, so each guess is right with probability 8/10.

Next, we need some data, so let’s pretend that your friend rolls the die 10 times and correctly guesses the outcome 8 times. Calling this data D_10, the Bayes factor comparing H_2 to H_1 is P(D_10 | H_2) / P(D_10 | H_1) = (0.8^8 × 0.2^2) / ((1/6)^8 × (5/6)^2) ≈ 16,231.

Our likelihood ratio shows that your friend's hypothesis explains the data about 16,231 times better than the hypothesis that he just got lucky on 8 of the 10 guesses. This is pretty strange, since taken at face value it says we should believe H_2: your friend really can guess 8 out of 10 outcomes.
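The likelihood ratio for the 10 rolls can be reproduced with a short sketch. The helper `likelihood` is a hypothetical name introduced here; it assumes each guess is independent, and it uses exact fractions so no rounding creeps in before the final conversion.

```python
from fractions import Fraction


def likelihood(p_correct, n_correct, n_wrong):
    """Probability of one specific sequence of correct and wrong guesses.

    The binomial coefficient is left out on purpose: it is identical under
    both hypotheses, so it cancels when we take the ratio.
    """
    return p_correct ** n_correct * (1 - p_correct) ** n_wrong


p_h1 = Fraction(1, 6)   # H_1: fair die, friend is only guessing
p_h2 = Fraction(8, 10)  # H_2: friend is right 80% of the time

# D_10: 10 rolls, 8 guessed correctly, 2 guessed wrongly.
bf_10 = likelihood(p_h2, 8, 2) / likelihood(p_h1, 8, 2)

print(f"Bayes factor for D_10: {float(bf_10):,.0f}")
```

Each correct guess multiplies the ratio by 0.8 / (1/6) = 4.8 in favor of H_2, which is why a handful of hits is enough to produce such a large number.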

Using Prior Odds

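I don’t believe that my friend can guess 8 out of 10 outcomes, so I’m going to set strong prior odds in favor of the first hypothesis. We can make them extreme enough to cancel out the result of the Bayes factor: O(H_2) = 1 / 16,231

Now we can work out our full posterior odds: posterior odds = O(H_2) × P(D_10 | H_2) / P(D_10 | H_1) = (1 / 16,231) × 16,231 = 1

Well…posterior odds of 1 mean that, even with such a skeptical prior, the two hypotheses now look equally plausible. Once again, we can’t rule out that your friend can predict 8 out of 10 outcomes.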

But let’s say that your friend rolls the die five more times and predicts all five outcomes. So we have a new set of data, D_15, which represents 15 rolls, 13 of them guessed correctly:

Now we have posterior odds of 2,548, which strongly favors the hypothesis that your friend can actually predict 8 out of 10 outcomes.
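Here is a rough sketch of how the posterior odds move from 10 rolls to 15. The prior of 1/16,231 is an assumption on my part: it is the value that offsets the 10-roll Bayes factor and reproduces the posterior odds of 2,548 quoted above. The function name `bayes_factor` is also just illustrative.

```python
def bayes_factor(n_correct, n_wrong, p_h2=0.8, p_h1=1 / 6):
    """Likelihood ratio P(D | H_2) / P(D | H_1) for a run of guesses."""
    like_h2 = p_h2 ** n_correct * (1 - p_h2) ** n_wrong
    like_h1 = p_h1 ** n_correct * (1 - p_h1) ** n_wrong
    return like_h2 / like_h1


# Assumed skeptical prior odds for H_2, chosen to cancel the 10-roll result.
prior_odds_h2 = 1 / 16_231

# D_10: 8 correct out of 10. D_15: 13 correct out of 15.
posterior_10 = prior_odds_h2 * bayes_factor(8, 2)
posterior_15 = prior_odds_h2 * bayes_factor(13, 2)

print(f"Posterior odds after 10 rolls: {posterior_10:.2f}")
print(f"Posterior odds after 15 rolls: {posterior_15:,.0f}")
```

The five extra correct guesses multiply the odds by 4.8 five times over, about 2,548 in total, so even a very skeptical prior is overwhelmed quickly.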

This is really strange: 13 successful guesses in 15 rolls is highly unusual, yet the test now tells us to believe in your friend’s ability despite our strong prior. We can’t rely on our hypothesis test to solve statistical problems if we can’t explain what’s going on with it.

Alternative Hypotheses

The issue is that we don’t want to believe that your friend can predict 8 out of 10 outcomes. So you might propose an alternative hypothesis, H_3: your friend is cheating with a special die that rolls a certain value 80% of the time.

We’ll start by comparing H_2 to our new hypothesis, H_3, and we’ll need new prior odds for H_2. Let’s say we believe it is 1,000 times more likely that our friend is cheating with a special die than that he can predict the rolls. This gives us prior odds of O(H_2) = 1/1000, so we get the following result: posterior odds = O(H_2) × P(D_15 | H_2) / P(D_15 | H_3) = (1/1000) × 1 = 1/1000

The posterior odds are the same as our prior odds. This happens because our likelihoods are the same (P(D_15 | H_2) = P(D_15 | H_3)). Whether your friend predicts the roll or the special die produces it, each correct call has probability 0.8, so the Bayes factor is exactly 1.

No amount of data can change our minds about H_3 versus H_2, because both explain the data equally well. In the same way, no amount of data can convince someone who starts out favoring H_2 that your friend is cheating with a special die. More data will only make that person more certain that your friend can predict 8 out of 10 outcomes.
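A small sketch makes the stalemate concrete. Only the first data set (13 correct out of 15) comes from the example; the larger roll counts are hypothetical values added purely to show that more data changes nothing, and the names used are illustrative.

```python
def likelihood(p_correct, n_correct, n_wrong):
    """Probability of one specific sequence of correct and wrong calls."""
    return p_correct ** n_correct * (1 - p_correct) ** n_wrong


p_h2 = 0.8  # H_2: friend predicts the roll correctly 80% of the time
p_h3 = 0.8  # H_3: special die lands on the called value 80% of the time

prior_odds_h2_vs_h3 = 1 / 1000

# (correct, wrong) pairs: the first is D_15, the rest are hypothetical.
datasets = [(13, 2), (130, 20), (650, 100)]

posteriors = []
for n_correct, n_wrong in datasets:
    bf = likelihood(p_h2, n_correct, n_wrong) / likelihood(p_h3, n_correct, n_wrong)
    posteriors.append(prior_odds_h2_vs_h3 * bf)
    print(f"{n_correct + n_wrong:>4} rolls: Bayes factor = {bf}, "
          f"posterior odds = {posteriors[-1]}")
```

Because both hypotheses assign the same probability to every outcome, the Bayes factor stays at 1 and the posterior odds never leave the prior, however many rolls are collected.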

This is what makes conspiracy theories so hard to argue against. If the hypothesis isn’t falsifiable, then more data only makes its believers more certain that the conspiracy is true.

This is why it’s important to be willing to change your prior beliefs when solving a problem. If you refuse to change your beliefs, then you’re no longer reasoning in a Bayesian way.

[End]