A General Overview of Statistics Used in Data Science (Part Two)

I need a certain degree of freedom to write this.

Correlation

Exploring data involves examining correlations among predictors, and between predictors and a target variable.

Some terms:

Correlation coefficient: measures the extent to which two numeric variables are associated with one another. It ranges from -1 (perfect negative correlation) to +1 (perfect positive correlation), and a value of zero indicates no linear correlation. It is sensitive to outliers.

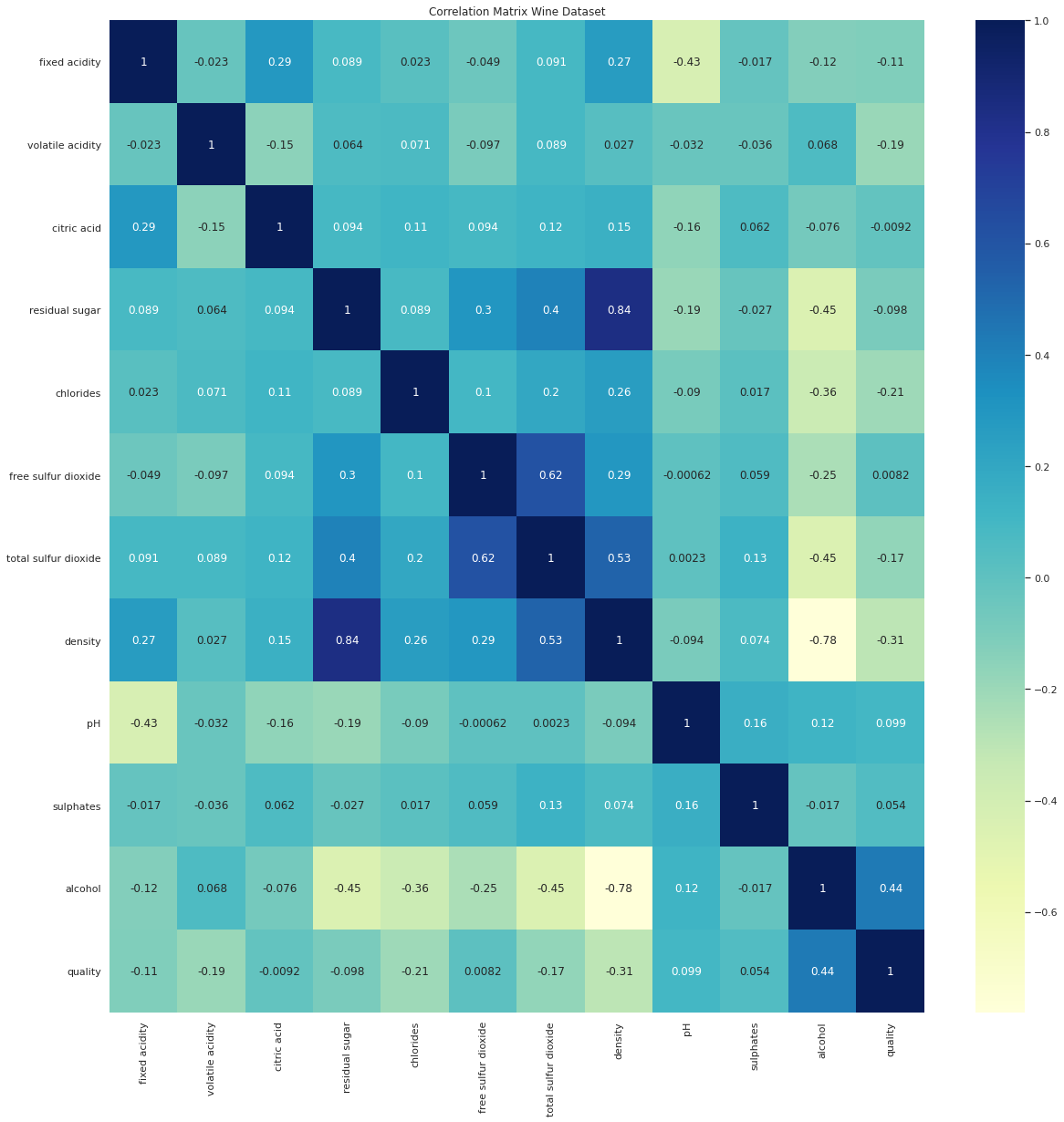

Correlation matrix: a table that displays the correlation coefficient between each pair of variables. The variables are shown in both the rows and the columns. The darker squares correspond to stronger relationships.

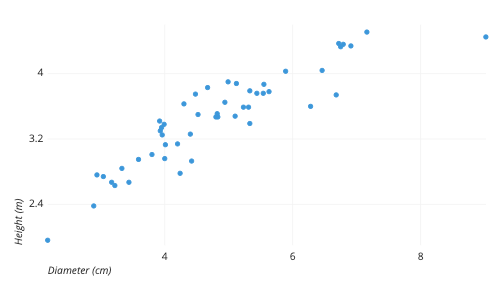

Scatterplot: A plot that shows a relationship between two measured variables.
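To make the correlation coefficient a bit more concrete, here is a minimal sketch of the Pearson version in plain Python. The function name `pearson_r` and the numbers are made up for illustration; the second call adds one extreme point to show how sensitive the coefficient is to outliers.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Numerator: sum of products of deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Denominator: square roots of the sums of squared deviations.
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)

hours = [1, 2, 3, 4, 5]          # made-up data
score = [52, 55, 61, 64, 70]     # made-up data
r = pearson_r(hours, score)      # strong positive correlation

# One extreme point is enough to change the coefficient a lot.
r_outlier = pearson_r(hours + [6], score + [20])
```

In this made-up example the single outlier is enough to flip the sign of the coefficient, which is why it pays to look at a scatterplot before trusting the number.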

Two or More Variables

Some terms:

Contingency table: a tally of counts between two or more categorical variables

Hexagonal binning: plot of two numeric variables with records that are binned into hexagons

Contour plot: plot showing the density of two numeric variables

Violin plot: similar to a boxplot, but it shows the density estimate, with the density plotted on the y-axis. The density is mirrored and flipped over, and the resulting shape resembles a violin. The violin plot shows nuances in the distribution that cannot be seen in a boxplot. A boxplot, however, shows outliers more clearly than a violin plot.
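Of the terms above, the contingency table is the easiest to build by hand. The sketch below tallies two categorical variables with `collections.Counter`; the loan grades and statuses are invented for illustration.

```python
from collections import Counter

# Made-up records: (loan grade, loan status).
records = [
    ("A", "paid"), ("A", "paid"), ("A", "late"),
    ("B", "paid"), ("B", "late"), ("B", "late"),
]

counts = Counter(records)                      # tally each (grade, status) pair
rows = sorted({grade for grade, _ in records})
cols = sorted({status for _, status in records})

# Nested dict: table[grade][status] -> count (0 if the pair never occurs).
table = {row: {col: counts[(row, col)] for col in cols} for row in rows}

for row in rows:
    print(row, [table[row][col] for col in cols])
```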

The most important step in data analysis is to look at the data. We can get a lot of insight by visualizing and summarizing the data.

Sampling and Data Distributions

Some terms:

Sample: a subset from a large data set

Population: the large data set

N (n): the size of the population (sample)

Random sampling: elements chosen at random. Each member of a population has an equal chance of being chosen for a sample at each draw.

Stratified sampling: dividing the population into strata and then randomly sampling from each stratum

Stratum (pl., strata): a homogeneous subgroup of the population with common characteristics

Simple random sample: the sample that results from random sampling without stratifying the population

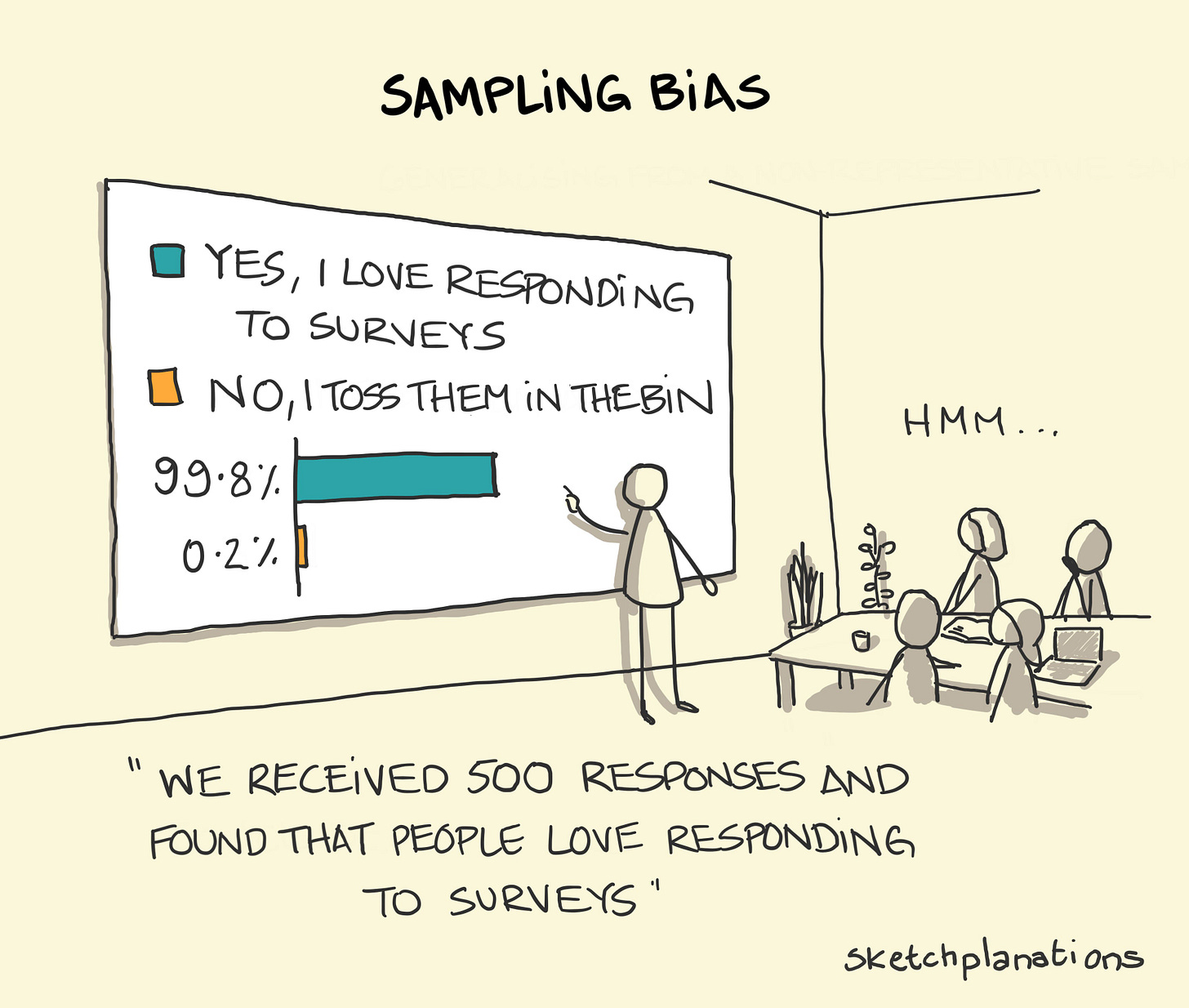

Bias: Systematic error. There should be a distinction made between errors due to bias and errors due to random chance. Bias can come in many different forms, and it may also be invisible.

Sample bias: a sample that misrepresents the population. It basically means the sample differs in some meaningful, nonrandom way from the larger population that it is meant to represent.

Random sampling is what will help us reduce bias and improve the quality of the data. Just remember that data quality is often more important than data quantity.
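Here is a rough sketch of the difference between a simple random sample and a stratified sample. The helper `stratified_sample`, the population, and the 10% sampling fraction are all assumptions made up for this example.

```python
import random
from collections import defaultdict

def stratified_sample(records, stratum_of, fraction, rng):
    """Randomly sample the same fraction from every stratum."""
    strata = defaultdict(list)
    for record in records:
        strata[stratum_of(record)].append(record)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))   # at least one per stratum
        sample.extend(rng.sample(members, k))         # drawn without replacement
    return sample

rng = random.Random(42)  # fixed seed so the sketch is repeatable

# Made-up population: 90 "web" customers and 10 "store" customers.
population = [("web", i) for i in range(90)] + [("store", i) for i in range(10)]

simple = rng.sample(population, 10)   # simple random sample: may miss "store" entirely
stratified = stratified_sample(population, lambda r: r[0], 0.1, rng)
```

The stratified version always contains nine web customers and one store customer, while the simple random sample only matches those proportions on average.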

Sample Mean and Population Mean

The symbol x̄ is used to represent the mean of a sample from a population. The symbol μ is used to represent the population mean.

Selection Bias

Selection bias is the practice of selectively choosing data, consciously or unconsciously, in a way that leads to misleading conclusions.

Some terms:

Selection bias: Bias from the way we select observations

Data snooping: Searching through data until something interesting turns up.

Regression to the Mean

Regression to the mean is the tendency of extreme observations to be followed by more central ones. Attaching special meaning to an extreme value can lead to a form of selection bias.

Specifying a hypothesis and collecting data through randomization and random sampling can reduce bias.

Repeated running of models in data mining and data snooping can increase bias.

Sampling Distribution

Some terms:

Sample statistic: metric calculated for sample data from a large population

Data distribution: frequency distributions of values in a data set

Sampling distribution: frequency distribution of sample statistics over many samples

Central limit theorem: the sampling distribution of a statistic such as the mean will tend to take on a normal shape as the sample size increases, even if the underlying data are not normally distributed. The means drawn from multiple samples will resemble a bell-shaped curve.

Standard error: the standard deviation of a sample statistic over many samples. As the sample size increases, the standard error decreases.

There is a difference between the distributions of individual data points (data distribution) and the distributions of a sample statistic (sampling distribution).

Standard deviation and standard error are different. Standard deviation measures the variability of individual data points while standard error measures the variability of sample metrics.
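The distinction is easier to see in a small simulation. This is only a sketch: the skewed "population" is generated at random, and the sample sizes (5 and 50) and the number of repeats are arbitrary choices.

```python
import math
import random
import statistics

rng = random.Random(0)

# Made-up, skewed "population": exponential values with a mean of about 1.
population = [rng.expovariate(1.0) for _ in range(20_000)]

def sample_means(n, repeats=1_000):
    """Means of `repeats` random samples of size n drawn from the population."""
    return [statistics.fmean(rng.sample(population, n)) for _ in range(repeats)]

sd_data = statistics.pstdev(population)       # variability of individual data points
se_n5 = statistics.stdev(sample_means(5))     # variability of sample means, n = 5
se_n50 = statistics.stdev(sample_means(50))   # variability of sample means, n = 50

# Formula-based estimate from a single sample of size 50: s / sqrt(n).
one_sample = rng.sample(population, 50)
se_formula = statistics.stdev(one_sample) / math.sqrt(50)

print(sd_data, se_n5, se_n50, se_formula)
```

The standard deviation of the data stays put no matter how you sample, while the standard error shrinks as the sample size grows.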

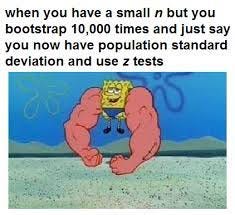

Bootstrap

One easy way to estimate the sampling distribution of a statistic is to draw additional samples, with replacement, from the sample itself and recalculate the statistic for each resample. This is called the bootstrap.

Some terms:

Bootstrap sample: sample taken with replacement from an observed data set

Resampling: Process of taking repeated samples from observed data

Here is the method for bootstrap resampling:

1. Draw a sample value, record it, and then replace it

2. Repeat n times

3. Record the mean of the n resampled values

4. Repeat steps 1-3 R times

5. Use the R results to:

a. Calculate their standard deviation (this estimates the standard error of the sample mean)

b. Produce a histogram or boxplot

c. Find a confidence interval
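The resampling procedure above can be sketched in a few lines of Python. The function name `bootstrap_means`, the income figures, and the choice of R = 2,000 are assumptions for illustration only.

```python
import random
import statistics

def bootstrap_means(data, R, rng):
    """Return R bootstrap means, each computed from n draws with replacement."""
    n = len(data)
    means = []
    for _ in range(R):                              # repeat R times
        resample = rng.choices(data, k=n)           # n draws, each value "replaced"
        means.append(statistics.fmean(resample))    # record the mean of the resample
    return means

rng = random.Random(1)
incomes = [32, 45, 51, 38, 120, 47, 55, 41, 39, 60]   # made-up sample
boot = bootstrap_means(incomes, R=2_000, rng=rng)

# The standard deviation of the bootstrap means estimates the standard error.
boot_se = statistics.stdev(boot)
print(statistics.fmean(incomes), boot_se)
```

From here, `boot` can be fed to a histogram or used to read off percentile-based interval endpoints.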

The bootstrap does not create new data. All it does is inform us about how lots of additional samples would behave if they were drawn from a population like our original sample.

Bootstrap is a good tool for assessing the variability of a sample statistic.

Confidence Intervals

Some terms:

Confidence level: the percentage of confidence intervals, constructed in the same way from the same population, that are expected to contain the statistic of interest. Confidence intervals are typically used to present estimates as interval ranges.

Interval endpoints: Top and bottom of the confidence interval

Confidence intervals come with a percentage, such as 90% or 95%. This percentage is called the level of confidence. The higher the level of confidence, the wider the interval. Also, the smaller the sample, the wider the interval will be.

The lower the level of confidence, the narrower the confidence interval will be.

Bootstrap is a good way to construct confidence intervals.
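One common way to do this is the percentile method: sort the bootstrap statistics and trim an equal share from each end. The sketch below assumes that method; the helper names, the sample values, and R = 2,000 are made up, and the index arithmetic is a simple approximation of the percentiles.

```python
import random
import statistics

def bootstrap_sorted_means(data, R, rng):
    """Sorted means of R resamples drawn with replacement."""
    n = len(data)
    return sorted(statistics.fmean(rng.choices(data, k=n)) for _ in range(R))

def percentile_interval(sorted_values, level):
    """Trim (1 - level) / 2 of the values from each end and return the endpoints."""
    R = len(sorted_values)
    tail = (1 - level) / 2
    lo_idx = int(tail * R)
    hi_idx = min(R - 1, int((1 - tail) * R))
    return sorted_values[lo_idx], sorted_values[hi_idx]

rng = random.Random(7)
sample = [32, 45, 51, 38, 120, 47, 55, 41, 39, 60]   # made-up sample
means = bootstrap_sorted_means(sample, 2_000, rng)

ci90 = percentile_interval(means, 0.90)
ci95 = percentile_interval(means, 0.95)
print(ci90, ci95)
```

Because both intervals come from the same set of bootstrap means, the 95% interval always contains the 90% one, which matches the rule that a higher level of confidence gives a wider interval.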

A confidence interval can be used to get an idea of how variable a sample result might be. A data scientist would then use the information to find a potential error in the estimate or to find if a larger sample is needed or not.

Normal Distribution

The bell-shaped normal distribution is iconic in statistics.

Some terms:

Error: the difference between predicted or average value and data point

Standardize: subtract mean and divide by standard deviation

z-score: the result of standardizing an individual data point

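Standard normal: a normal distribution that has a mean equal to zero and a standard deviation equal to one

QQ-Plot: a plot that compares the quantiles of a sample distribution to those of a specified distribution, such as the normal distribution

To convert data into z-scores, subtract the mean of the data and divide by the standard deviation. You can then compare the data to the standard normal distribution. Note that standardizing does not make the data normally distributed; it only puts the data on the same scale.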
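As a quick sketch of standardizing, here is the calculation in plain Python. The function name `z_scores` and the heights are invented, and the sample standard deviation (dividing by n - 1) is an assumption; some texts divide by n instead.

```python
import statistics

def z_scores(values):
    """Standardize values: subtract the mean, then divide by the standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)      # sample standard deviation (n - 1 denominator)
    return [(v - mean) / sd for v in values]

heights_cm = [150, 160, 165, 170, 180]   # made-up data
z = z_scores(heights_cm)
print(z)
```

After standardizing, the values have a mean of zero and a standard deviation of one, so a z-score of 2 simply means "two standard deviations above the mean".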

Long-Tailed Distributions

Most data is not normally distributed.

Some terms:

Tail: where extreme values occur at low frequency

Skew: where one tail of a distribution is longer than the other

Sometimes, the distribution is highly skewed (asymmetric) or can be discrete.

t-Distribution

The t-distribution is used to depict distributions of sample statistics. It is shaped like the normal distribution but is thicker and longer in the tails. The larger the sample, the more normally shaped the t-distribution becomes.

Some terms:

n: sample size

Degrees of freedom: a parameter that lets the t-distribution adjust to different sample sizes, statistics, and numbers of groups

The t-distribution is used as a reference basis for the distribution of sample means, regression parameters, and more.

Binomial distribution

The binomial distribution is the frequency distribution of the number of successes in a given number of trials.

Some terms:

Trial: an event with a discrete outcome

Success: outcome of interest for a trial

Binomial: having two outcomes

Binomial trial: a trial with two outcomes

Binomial distribution: distribution of number of successes

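Binomial outcomes are important to model because they represent fundamental yes/no decisions, such as buy or don't buy, or click or don't click.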
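For a feel of the numbers, here is a small sketch of the binomial probability formula using only the standard library. The function name `binomial_pmf` and the scenario (5 trials, each with a 0.1 chance of success) are assumptions for illustration.

```python
import math

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

# Assumed numbers: 5 trials, each with a success probability of 0.1.
p_zero = binomial_pmf(0, n=5, p=0.1)                             # no successes at all
p_at_most_two = sum(binomial_pmf(k, 5, 0.1) for k in range(3))   # 0, 1, or 2 successes
print(p_zero, p_at_most_two)
```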

Poisson and related distributions

The Poisson distribution tells us the distribution of the number of events in a fixed interval of time or space. The key parameter of the Poisson distribution is lambda.

Some terms:

Lambda: the rate at which events happen. The mean number of events that occur in a specified interval of space/time

Poisson distribution: frequency distribution of the number of events in a fixed interval of time or space

Exponential distribution: frequency distribution of time or distance from one event to the next event

Weibull distribution: a generalized version of the exponential distribution in which the event rate is allowed to shift over time

Use a Poisson distribution to model the number of events in a fixed interval of time or space when events occur at a constant rate.

Use an exponential distribution to model the time or distance between one event and the next.

Use a Weibull distribution when the event rate changes over time.
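The three distributions can be tried out with Python's standard library. This is a sketch under assumed numbers: a rate of 2 events per interval, and a Weibull shape of 1.5 chosen only to show a rate that increases over time.

```python
import math
import random
import statistics

def poisson_pmf(k, lam):
    """Probability of exactly k events in one interval when the mean count is lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)

lam = 2.0                       # assumed rate: 2 events per interval
p_none = poisson_pmf(0, lam)    # chance of an interval with no events

rng = random.Random(3)

# Exponential waiting times between events at the same rate; their mean is 1 / lam.
gaps = [rng.expovariate(lam) for _ in range(10_000)]
mean_gap = statistics.fmean(gaps)

# Weibull waiting times; random.weibullvariate takes (scale, shape).
# With shape > 1 the event rate increases over time.
wear_out = [rng.weibullvariate(1.0, 1.5) for _ in range(5)]

print(p_none, mean_gap, wear_out)
```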

Experiments and Testing

The goal of an experiment is to confirm or reject a hypothesis.

A/B Testing

The A/B test is an experiment where two groups are tested to find out which one performs better.

Some terms:

Treatment: Something to which a subject is exposed

Treatment group: A group of subjects exposed to a specific treatment

Control group: A group of subjects that receives no treatment or the standard treatment

Randomization: Randomly assigning subjects to treatments

Subjects: The items (web visitors, patients, etc.) that are exposed to treatments

Test statistic: The metric used to measure the effect of the treatment

A/B tests are commonly used in marketing or web design. Some examples of A/B testing include:

Testing two web pages to determine which produces the most clicks

Testing two ads to find out which one converts the most users

Testing two prices to find out which one yields the most profit

Proper A/B tests have subjects that can be assigned to one treatment or another. It’s best if the subjects are randomized to the treatments.

Hypothesis Test

Some terms:

Null hypothesis: No relationship between variables. In a nutshell, it basically means nothing special has happened.

Alternative hypothesis: What you hope to prove

Resampling

Resampling means repeatedly drawing sample values from observed data, with the general goal of assessing random variability in a statistic. It can also be used to assess and improve the accuracy of some machine learning models.

Some terms:

Permutation test: Procedure of combining two or more samples together and reallocating the observations to resamples. Basically, multiple samples are combined and shuffled.

Resampling: Drawing more samples from the observed dataset

Here are the steps of the permutation test:

Combine all results from different groups into one data set

Shuffle the combined data and then randomly draw (without replacement) a resample of the same size as group A

From the remaining data, do the same for groups B, C, and D

Calculate estimate or statistic for the resamples

Repeat the previous steps R times to yield a permutation distribution

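You can now go back and compare the observed difference between groups with the set of permuted differences. If the observed difference lies well within the permuted differences, it could easily have been produced by chance; if it lies outside most of them, chance is an unlikely explanation.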
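Here is a minimal sketch of the permutation test for the simplest case of two groups. The function name `permutation_diffs`, the session times, and R = 2,000 are made up; the difference in means is just one possible choice of statistic.

```python
import random
import statistics

def permutation_diffs(group_a, group_b, R, rng):
    """Differences in means (B minus A) after R random reshufflings."""
    combined = list(group_a) + list(group_b)       # combine the groups
    n_a = len(group_a)
    diffs = []
    for _ in range(R):
        rng.shuffle(combined)                      # shuffle the combined data
        resample_a = combined[:n_a]                # same size as group A
        resample_b = combined[n_a:]                # the remainder, same size as group B
        diffs.append(statistics.fmean(resample_b) - statistics.fmean(resample_a))
    return diffs

rng = random.Random(11)
page_a = [21, 25, 19, 30, 27, 24, 22, 26]   # made-up session times (seconds)
page_b = [28, 33, 29, 35, 31, 27, 34, 30]   # made-up session times (seconds)

observed = statistics.fmean(page_b) - statistics.fmean(page_a)
perm = permutation_diffs(page_a, page_b, R=2_000, rng=rng)

# Share of shuffles whose difference is at least as large as the observed one.
p_value = sum(d >= observed for d in perm) / len(perm)
print(observed, p_value)
```

The share computed on the last line is a one-sided p-value for this made-up data: the smaller it is, the harder it is to blame the observed difference on chance.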

p-Values and Statistical Significance

Statistical significance is how we measure whether an experiment yields a result more extreme than what chance alone might produce.

Some terms:

p-value: given a chance model that embodies the null hypothesis, the probability of obtaining results at least as extreme as the observed results.

Alpha: the probability threshold of "unusualness" that chance results must surpass for the actual outcome to be deemed statistically significant. Typical thresholds are 5% and 1%.

Type 1 error: Rejecting the null hypothesis when it is actually true (mistakenly concluding that an effect is real)

Type 2 error: Not rejecting the null hypothesis when it is actually false (mistakenly concluding that an effect is due to chance)

t-Tests

Some terms:

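Test statistic: a metric for the difference or effect of interest

t-statistic: a standardized version of common test statistics such as the mean difference, used to determine if you should reject the null hypothesis

t-distribution: a reference distribution to which the observed t-statistic can be compared. It is especially relevant for small sample sizes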
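As an illustration of a t-statistic, the sketch below computes Welch's version for two independent samples, which does not assume equal variances. That choice, the function name `welch_t`, and the data are assumptions for this example. Turning the statistic into a p-value needs the t-distribution, which Python's standard library does not provide, so the sketch stops at the statistic.

```python
import math
import statistics

def welch_t(a, b):
    """Welch's t-statistic for the difference in means (b minus a)."""
    var_a = statistics.variance(a)     # sample variance (n - 1 denominator)
    var_b = statistics.variance(b)
    # Standard error of the difference between the two sample means.
    standard_error = math.sqrt(var_a / len(a) + var_b / len(b))
    return (statistics.fmean(b) - statistics.fmean(a)) / standard_error

page_a = [21, 25, 19, 30, 27, 24, 22, 26]   # made-up session times (seconds)
page_b = [28, 33, 29, 35, 31, 27, 34, 30]   # made-up session times (seconds)
t_stat = welch_t(page_a, page_b)
print(t_stat)
```

The further the t-statistic is from zero, the less plausible the null hypothesis of no difference becomes.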

Multiple Testing

Some terms:

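False discovery rate: across multiple tests, the proportion of results declared significant that are actually Type 1 errors (false positives)

Alpha inflation: the more statistical tests you run, the higher the probability of making at least one Type 1 error

Adjustment of p-values: accounting for the fact that multiple tests were done on the same data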

Overfitting: fitting the noise

Multiplicity can increase the risk of concluding that something is significant if you’re doing a data mining project. You can use a holdout sample with labeled outcome variables to help avoid misleading results.
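Alpha inflation is easy to quantify if we assume the tests are independent. The sketch below also shows the Bonferroni adjustment, which is one common way to adjust for multiple tests and is my choice for this example rather than something prescribed above. The function names are made up.

```python
def familywise_error(alpha, n_tests):
    """Chance of at least one Type 1 error across n independent tests."""
    return 1 - (1 - alpha) ** n_tests

def bonferroni_alpha(alpha, n_tests):
    """Per-test threshold under the Bonferroni adjustment."""
    return alpha / n_tests

one_test = familywise_error(0.05, 1)          # a single test at alpha = 0.05
twenty_tests = familywise_error(0.05, 20)     # twenty tests, each at alpha = 0.05
adjusted = bonferroni_alpha(0.05, 20)         # stricter threshold for each test
print(one_test, twenty_tests, adjusted)
```

With twenty independent tests at the 5% level, the chance of at least one false positive is roughly 64%, which is why the per-test threshold needs to be tightened.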

Degrees of Freedom

Degrees of freedom refer to the number of values free to vary.

Some terms:

sample size: number of observations in the data

d.f.: degrees of freedom

ANOVA

ANOVA is a procedure for analyzing results from experiments with multiple groups.

Some terms:

Pairwise comparison: hypothesis test between two groups

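Omnibus test: a single hypothesis test of the overall variance among multiple group means

Decomposition of variance: separation of the components that contribute to an individual value (such as the overall average, the treatment effect, and the residual error)

F-statistic: a standardized statistic that measures whether the differences among group means exceed what might be expected by chance. It is the ratio of the variance across group means to the variance due to residual error. The higher the ratio, the more statistically significant the result.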

SS: Sum of Squares
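To show how the sums of squares feed into the F-statistic, here is a sketch of a one-way ANOVA calculation. The function name `f_statistic` and the tiny data set are invented so that the arithmetic is easy to follow by hand.

```python
import statistics

def f_statistic(groups):
    """One-way ANOVA F: variance across group means over residual variance."""
    all_values = [v for g in groups for v in g]
    grand_mean = statistics.fmean(all_values)
    k, n = len(groups), len(all_values)
    # SS between: how far each group mean sits from the grand mean.
    ss_between = sum(len(g) * (statistics.fmean(g) - grand_mean) ** 2 for g in groups)
    # SS within (residual): how far each value sits from its own group mean.
    ss_within = sum((v - statistics.fmean(g)) ** 2 for g in groups for v in g)
    ms_between = ss_between / (k - 1)      # k - 1 degrees of freedom
    ms_within = ss_within / (n - k)        # n - k degrees of freedom
    return ms_between / ms_within

groups = [[1, 2, 3], [2, 3, 4], [5, 6, 7]]   # made-up data for three groups
f_value = f_statistic(groups)
print(f_value)
```

For this data the sum of squares between groups is 26 and the sum of squares within groups is 6, giving an F-statistic of 13.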

Chi-Square Test

The chi-square test is used to test how well count data fit some expected distribution. You would use it when you want to know whether the observed differences might be due to chance. Its role is to establish statistical significance.

Some terms:

Expectation or expected: How we expect the data to turn out

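The formula:

$$\chi^2 = \sum_{i} \frac{(O_i - E_i)^2}{E_i}$$

where $O_i$ is the observed count and $E_i$ is the expected count in cell $i$.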
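As a worked sketch, the chi-square statistic sums the squared difference between observed and expected counts, divided by the expected count, over all cells. The die-roll counts below are made up, and the function name `chi_square` is only for illustration.

```python
def chi_square(observed, expected):
    """Sum of (observed - expected)^2 / expected over all cells."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))

# Made-up example: 60 rolls of a die that is expected to be fair.
observed = [8, 12, 9, 11, 10, 10]
expected = [10] * 6                  # a fair die gives 10 of each face on average
stat = chi_square(observed, expected)
print(stat)
```

Here the statistic works out to (4 + 4 + 1 + 1 + 0 + 0) / 10 = 1.0. The larger the statistic, the worse the fit between the data and the expected distribution.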

Multi-Arm Bandit Algorithm

Multi-arm bandits allow for more explicit optimization and rapid decision-making. In short, it allows for multiple treatments at once.

Some terms:

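Multi-arm bandit: an imaginary slot machine with multiple arms for the customer to choose from, each with a different payoff. It is an analogy for a multi-treatment experiment.

Arm: a treatment in an experiment

Win: the experimental analog of a win at the slot machine (for example, a customer clicks on a link)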
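One simple bandit strategy is epsilon-greedy: most of the time pull the arm that looks best so far, and occasionally pull a random arm to keep exploring. The sketch below is a simulation under assumptions of my own: the strategy itself, the win rates, epsilon = 0.1, and the function name are all illustrative choices.

```python
import random

def epsilon_greedy(true_rates, n_rounds, epsilon, rng):
    """Simulate an epsilon-greedy bandit; return pulls and wins per arm."""
    n_arms = len(true_rates)
    pulls = [0] * n_arms
    wins = [0] * n_arms
    for _ in range(n_rounds):
        if rng.random() < epsilon or 0 in pulls:
            arm = rng.randrange(n_arms)        # explore: pick any arm at random
        else:
            # Exploit: pick the arm with the best observed win rate so far.
            arm = max(range(n_arms), key=lambda a: wins[a] / pulls[a])
        pulls[arm] += 1
        if rng.random() < true_rates[arm]:     # simulated "win"
            wins[arm] += 1
    return pulls, wins

rng = random.Random(5)
# Hypothetical true win rates for three treatments (unknown in a real experiment).
pulls, wins = epsilon_greedy([0.02, 0.05, 0.10], n_rounds=5_000, epsilon=0.1, rng=rng)
print(pulls, wins)
```

Unlike a classic A/B test, the traffic is not split evenly: arms that appear to win get shown more often while the experiment is still running.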

Sample size and power

Some terms:

Effect size: the minimum size of the effect that you hope to be able to detect in a statistical test, such as a 20% improvement in click rates

Power: the probability of detecting a given effect size with a given sample size

Significance level: the level at which the test is conducted

In short, power calculations have four moving parts. Specify any three of them and the fourth can be calculated:

Sample size (often the unknown: how big a sample is needed)

Effect size (the minimum size that you want to detect)

Significance level (alpha) (the level at which the test will be conducted)

Power (the required probability of detecting the effect size)
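To show how these pieces fit together, here is a sketch that solves for the sample size when comparing two proportions. It uses a common normal-approximation formula, which is an assumption on my part and only one of several ways to do this; the function name and the conversion rates are made up.

```python
import math
from statistics import NormalDist

def sample_size_two_proportions(p1, p2, alpha=0.05, power=0.8):
    """Approximate subjects needed per group for a two-sided test of p1 vs p2."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # from the significance level
    z_power = NormalDist().inv_cdf(power)           # from the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    effect = abs(p2 - p1)                           # effect size to detect
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect**2)

# Assumed baseline conversion rate of 10%.
n_small_effect = sample_size_two_proportions(0.10, 0.11)   # detect 10% -> 11%
n_large_effect = sample_size_two_proportions(0.10, 0.15)   # detect 10% -> 15%
print(n_small_effect, n_large_effect)
```

The smaller the effect you want to detect, the larger the sample you need. Asking for more power or a stricter significance level pushes the required sample size up as well.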

[End of Part Two]
