A General Overview of Statistics Used in Data Science (Part Three Final)

I went traveling and now I'm lost at a random forest

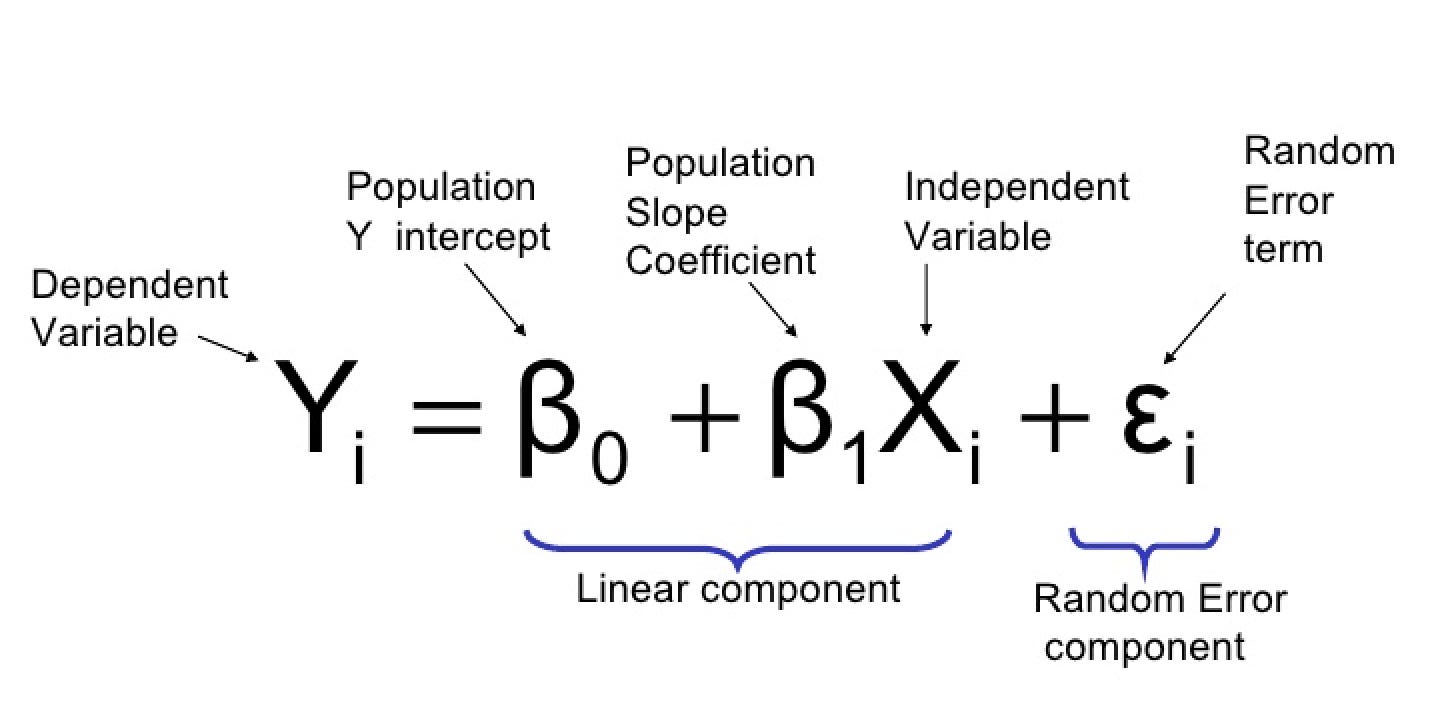

Simple Linear Regression

Simple linear regression models the relationship between the magnitude of one variable and that of a second variable.

Some terms:

Response: variable we’re trying to predict (dependent variable, target, outcome)

Independent variable: variable used to predict the response (feature, attribute)

Record: vector of predictor and outcome values for a specific individual (row, case)

Intercept: where the regression line crosses the Y-axis, that is, the predicted value of Y when X = 0

Regression coefficient: the slope of the regression line (weights)

Fitted values: estimates ŷᵢ obtained from the regression line (predicted values)

Residuals: the difference between observed values and fitted values (errors)

Least squares: method of fitting regression by minimizing the sum of squared residuals (ordinary least squares)

The regression equation:

Linear regression estimates how much Y will change when X is changed. We’re trying to predict the Y variable from X.

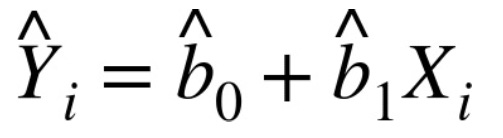

The fitted value equation:

Regression is used to form a model to predict outcomes for new data. The goal is to understand the relationship.

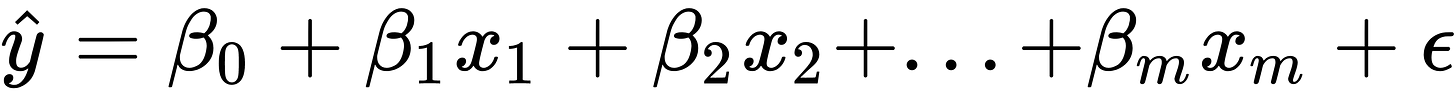
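
To make the terms above concrete, here is a minimal sketch of least squares for a single predictor, using only the Python standard library. The data and the function name `fit_simple_regression` are made up for illustration; in practice you would likely reach for a statistics library instead.

```python
from statistics import mean

def fit_simple_regression(x, y):
    """Return (intercept, slope) that minimize the sum of squared residuals."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

# Toy data (made up): y is roughly 2x + 1.
x = [1, 2, 3, 4, 5]
y = [3.1, 4.9, 7.2, 9.0, 10.8]

b0, b1 = fit_simple_regression(x, y)
fitted = [b0 + b1 * xi for xi in x]                 # fitted (predicted) values
residuals = [yi - fi for yi, fi in zip(y, fitted)]  # observed minus fitted
print(f"intercept={b0:.2f}, slope={b1:.2f}")
```

With an intercept in the model, the residuals from a least squares fit sum to zero (up to floating point error), which is a quick sanity check on the fit.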

Multiple Linear Regression

When there are multiple predictors, the equation is extended:

Some terms:

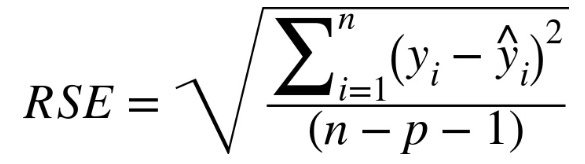

Root mean squared error: square root of average squared error of the regression

Residual standard error: same as root mean squared error, but adjusted for degrees of freedom

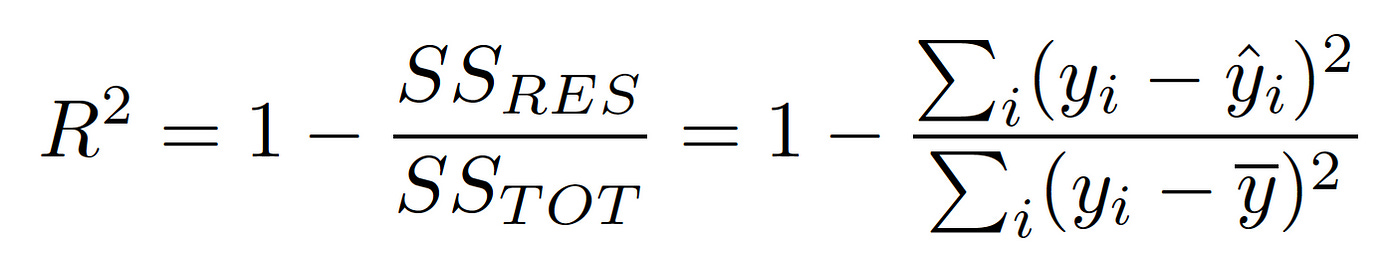

R-squared: proportion of variance explained by the model, from 0 to 1

t-statistic: coefficient for predictor, divided by the standard error

Weighted regression: regression with records having different weights (give records more or less weight in fitting the equation). Important for analysis of complex surveys.

The most important performance metric is root mean squared error:

RMSE measures the overall accuracy of the model. Similar to RMSE is RSE (residual standard error):

The only difference is that the denominator is the degrees of freedom (N - p - 1) instead of the number of records.

Another useful metric you’ll see is the R-squared statistic, also called the coefficient of determination:

It measures the proportion of variance in the data that is accounted for by the model. It is mainly used to assess how well the model fits the data.

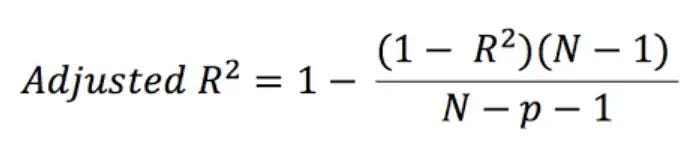

One way to account for model complexity is to use adjusted R^2, which penalizes adding more predictors:

Here N is the number of records and p is the number of variables in the model.
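
The metrics in this section can be sketched in a few lines of Python. This is only an illustration under the usual textbook definitions: `n` plays the role of N (number of records), `p` is the number of predictors, and the observed and fitted values are made up.

```python
import math

def regression_metrics(y, y_hat, p):
    """Compute RMSE, RSE, R-squared and adjusted R-squared for p predictors."""
    n = len(y)
    y_bar = sum(y) / n
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))  # squared residuals
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)               # total variation
    rmse = math.sqrt(ss_res / n)
    rse = math.sqrt(ss_res / (n - p - 1))   # denominator is degrees of freedom
    r2 = 1 - ss_res / ss_tot
    adj_r2 = 1 - (1 - r2) * (n - 1) / (n - p - 1)
    return {"rmse": rmse, "rse": rse, "r2": r2, "adj_r2": adj_r2}

# Made-up observed and fitted values from a model with one predictor.
metrics = regression_metrics([3, 5, 7, 10], [3, 5, 8, 9], p=1)
print(metrics)
```

Because the adjustment penalizes extra predictors, adjusted R-squared comes out lower than plain R-squared whenever the fit is not perfect.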

Prediction Using Regression

The primary purpose of regression is prediction (in data science, at least).

Some terms:

Prediction interval: uncertainty interval around the predicted value

Extrapolation: extension of the model beyond the range of the data used to fit it

Regression should not be used to extrapolate beyond the range of data. Using extrapolation could lead to error.

Factor Variables

Factor variables or categorical variables take on a limited number of discrete values.

Some terms:

Dummy variables: binary 0-1 variables to represent subgroups

Reference coding: one level of the factor is used as a reference and other factors are compared (treatment coding)

One hot encoder: all factor levels are retained (not appropriate for multiple linear regression)

Deviation coding: Compares each level against the overall mean (sum contrasts)

Factor variables have to be converted into numeric variables in order to be used in the regression.

The most common method to encode a factor variable with P distinct values is to use P - 1 dummy variables.

A factor variable with many levels may need to be consolidated into a variable with fewer levels.
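
As a rough sketch of reference coding, the helper below turns a factor with P levels into P - 1 dummy columns. The function name `reference_code` and the color data are hypothetical and only meant to show the idea.

```python
def reference_code(values, reference=None):
    """Encode a factor as P - 1 dummy columns, dropping the reference level."""
    levels = sorted(set(values))
    if reference is None:
        reference = levels[0]
    dummy_levels = [lv for lv in levels if lv != reference]
    rows = [[1 if v == lv else 0 for lv in dummy_levels] for v in values]
    return dummy_levels, rows

colors = ["red", "green", "blue", "green"]
columns, encoded = reference_code(colors, reference="blue")
print(columns)  # dummy columns for every level except the reference
print(encoded)  # a record at the reference level is all zeros
```

Keeping all P columns instead would make the dummies sum to 1 in every row, which duplicates the intercept and causes the multicollinearity problem that P - 1 coding avoids.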

Interpreting Regression Equation

Some terms:

Correlated variables: predictor variables that tend to move together, which makes individual coefficients hard to interpret

Multicollinearity: when predictor variables have perfect, or near-perfect, correlation, making the regression unstable or impossible to compute (redundancy among predictor variables)

Confounding variables: a variable that influences both the dependent and the independent variable, leading to a spurious relationship

Main effect: the relationship between outcome and predictor variable, independent of other variables

Interactions: the interdependent relationship between two or more predictors

Multicollinearity happens when:

A variable is included multiple times, usually by error

P dummies, instead of P - 1 dummies, are created from a factor variable

Two variables are nearly perfectly correlated with each other

Multicollinearity must be addressed in regression.

Regression Diagnostics

Some terms:

Standardized residuals: residuals divided by the standard error of residuals

Outliers: records that are far away from the rest of the data

Influential value: a value or record whose presence or absence makes a big difference in the regression equation

Leverage: degree of influence a record has on the regression equation

Heteroskedasticity: when some ranges of the outcome have residuals with higher variance (having heteroskedasticity may indicate an incomplete model)

Partial residual plots: a plot to show the relationship between a single predictor and outcome variable (added variables plot)

Extreme values are called outliers. You can detect outliers by using standardized residuals. The main interest in outliers is to identify problems with the data.

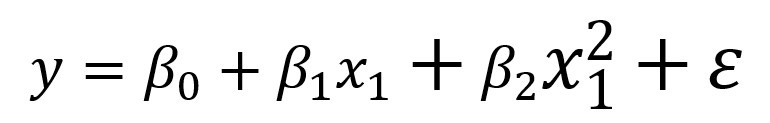
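
Here is a simplified sketch of flagging outliers with standardized residuals. It assumes the residuals are already available, divides them by the residual standard error, and uses a cutoff of 2.5 that is purely an assumption for illustration. Full standardized residuals also adjust for each record's leverage, which this sketch leaves out. The residual values are made up.

```python
import math

def standardized_residuals(residuals, p):
    """Divide each residual by the residual standard error (p predictors)."""
    n = len(residuals)
    rse = math.sqrt(sum(r ** 2 for r in residuals) / (n - p - 1))
    return [r / rse for r in residuals]

# Hypothetical residuals; the last record sits far from the regression line.
residuals = [0.2, -0.1, 0.3, -0.2, 0.1, -0.3, 0.2, -0.2, 0.1, 4.0]
std_res = standardized_residuals(residuals, p=1)

# Flag records whose standardized residual is large in absolute value.
flagged = [i for i, s in enumerate(std_res) if abs(s) > 2.5]
print(flagged)
```

A flagged record is a prompt to look at the data, not proof of an error: it might be a typo, a unit mismatch, or a perfectly valid but unusual case.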

Polynomial/Spline Regression

Some terms:

Polynomial regression: adds polynomial terms to the regression

Spline regression: fitting a smooth curve with a series of polynomial segments (a form of non-linear regression)

Knots: values that separate spline segments

Generalized additive models: spline model with an automated selection of knots

Naive Bayes

Some terms:

Conditional probability: the probability of observing some event given some other event

Posterior probability: the probability of outcome after predictor information has been incorporated

To apply naive Bayes to numerical predictors:

Use a probability model, such as the normal distribution, to estimate the conditional probability.

Naive Bayes works with categorical predictors and outcomes.
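
For a numerical predictor, the normal distribution approach can be sketched like this. The example uses a single made-up predictor (height) and two classes. With several predictors, the per-predictor likelihoods would be multiplied together, which is the "naive" independence assumption. The function names are hypothetical.

```python
import math
from statistics import mean, stdev

def normal_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and std dev sigma at x."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))

def posterior(x, samples_by_class):
    """Posterior probability of each class given one numeric predictor value."""
    n_total = sum(len(v) for v in samples_by_class.values())
    scores = {}
    for label, values in samples_by_class.items():
        prior = len(values) / n_total                             # class frequency
        likelihood = normal_pdf(x, mean(values), stdev(values))   # P(x | class)
        scores[label] = prior * likelihood
    total = sum(scores.values())
    return {label: s / total for label, s in scores.items()}

# Made-up training heights (cm) for two classes.
heights = {"short": [150, 155, 160, 158], "tall": [178, 182, 185, 190]}
probs = posterior(180, heights)
print(probs)
```

Dividing by the total at the end rescales the scores so the posterior probabilities across classes add up to 1.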

Discriminant analysis

Some terms:

Covariance: a measure of the extent to which one variable varies in concert with another

Discriminant function: maximizes the separation of the classes

Discriminant weights: used to estimate probabilities of belonging to one class or another

The covariance measures the relationship between x and y.

Discriminant analysis works with continuous or categorical predictors and categorical outcomes.

The covariance matrix is used to calculate the linear discriminant function, which distinguishes records belonging to one class from those belonging to another class.

Logistic regression

Logistic regression is similar to multiple linear regression, but the outcome is binary.

Some terms:

Logit: a function that maps a probability (between 0 and 1) to a range from negative infinity to infinity

Odds: ratio of ‘success’ (1) to ‘not success’ (0)

Log odds: the response in the transformed (now linear) model; after the linear model is fitted, the log odds is mapped back to a probability

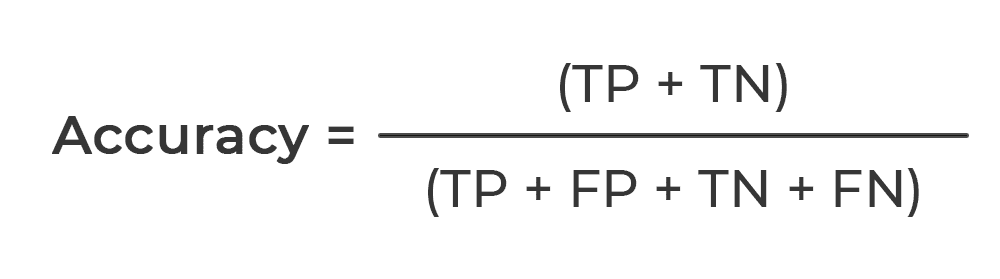
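
The relationship between probability, odds, and log odds is easy to check in code. This is a small sketch of the standard definitions; the function names are my own.

```python
import math

def odds(p):
    """Ratio of 'success' probability to 'not success' probability."""
    return p / (1 - p)

def logit(p):
    """Map a probability in (0, 1) to log odds in (-inf, inf)."""
    return math.log(odds(p))

def inverse_logit(log_odds):
    """Map log odds back to a probability (the logistic function)."""
    return 1 / (1 + math.exp(-log_odds))

p = 0.8
print(odds(p), logit(p), inverse_logit(logit(p)))
```

A probability of 0.5 corresponds to odds of 1 and log odds of 0, so positive log odds mean 'success' is more likely than not.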

Classification Models

Some terms:

Accuracy: percent of cases classified correctly

Confusion matrix: a tabular display of record counts by their predicted and actual classification status

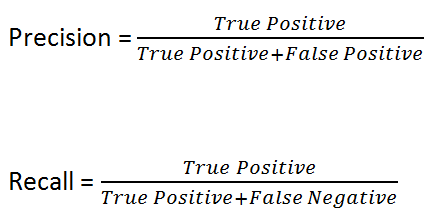

Sensitivity: percent of all 1s that are correctly classified as 1s (recall)

Specificity: percent of all 0s that are correctly classified as 0s

Precision: percent of predicted 1s that are 1s

ROC curve: plot of sensitivity vs specificity

Lift: a measure of how good a model is at identifying 1s at different probability cutoffs (it lets you look at the consequences of setting different probability cutoffs for classifying records as 1s)

Accuracy is a simple measure of the total proportion of records classified correctly:

accuracy = (TruePositive + TrueNegative) / (TruePositive + FalsePositive + TrueNegative + FalseNegative), where the denominator is the sample size

The precision measures the accuracy of predicted positive outcomes.

The recall measures the strength of the model to predict a positive outcome.

Another metric is specificity which measures the model’s ability to predict negative outcomes:

specificity = TrueNegative / (TrueNegative + FalsePositive)
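
The metrics above all come from the four cells of the confusion matrix, so they are quick to compute by hand. A minimal sketch, with made-up actual and predicted labels (1 = positive, 0 = negative):

```python
def classification_metrics(actual, predicted):
    """Compute accuracy, precision, recall and specificity for 0/1 labels."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == 1 and p == 1)  # true positives
    tn = sum(1 for a, p in pairs if a == 0 and p == 0)  # true negatives
    fp = sum(1 for a, p in pairs if a == 0 and p == 1)  # false positives
    fn = sum(1 for a, p in pairs if a == 1 and p == 0)  # false negatives
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),        # also called sensitivity
        "specificity": tn / (tn + fp),
    }

actual    = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
scores = classification_metrics(actual, predicted)
print(scores)
```

Note that the sketch does not guard against empty denominators, for example precision when the model predicts no 1s at all.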

Imbalanced Data

Imbalanced data is a problem for classification algorithms. A strategy for working with imbalanced data is to undersample or oversample.

Some terms:

Undersample: using fewer of the prevalent class records in the classification model

Oversample: using more of the rare class records in the classification model, bootstrapping if necessary

Up weight or down weight: attach more (or less) weight to the rare (or prevalent) class in the model

Data generation: like bootstrapping, except each new bootstrapped record is slightly different from its source

z-score: value that comes from standardization

K: number of neighbors considered in the nearest neighbor calculation

Undersampling leads to a smaller, more balanced data set, which can make it easier to prepare data and pilot models. How much data is enough depends on the application. Undersampling does risk throwing away useful information, so instead of downsampling you can oversample the rarer class by drawing additional rows with replacement (bootstrapping).
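
Oversampling by drawing rows with replacement can be sketched with the standard library alone. The helper name `oversample`, the fixed seed, and the choice to match the majority count exactly are assumptions made for this example.

```python
import random

def oversample(records, labels, minority, seed=0):
    """Bootstrap extra minority-class rows until both classes have equal counts."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    minority_rows = [r for r, l in zip(records, labels) if l == minority]
    majority_count = sum(1 for l in labels if l != minority)
    extra_needed = max(majority_count - len(minority_rows), 0)
    extra = rng.choices(minority_rows, k=extra_needed)  # sampling with replacement
    return records + extra, labels + [minority] * len(extra)

# Made-up data: 8 records of class 0 and only 2 of class 1.
records = list(range(10))
labels = [0] * 8 + [1] * 2
new_records, new_labels = oversample(records, labels, minority=1)
print(new_labels.count(0), new_labels.count(1))
```

If you oversample, do it only on the training data; duplicating rows before splitting would leak copies of the same record into the validation set.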

Machine Learning Section

K-Nearest Neighbors

K-Nearest Neighbors classifies a record by assigning it to a class that similar records belong to.

For each record to be classified/predicted:

Find K records that contain similar features

For classification, find the majority class among those similar records and assign that class to a new record

For prediction, find the average among those similar records and predict that average for the new record

Some terms:

Neighbor: a record that has similar predictor values to another record

Distance metrics: measures that sum up in a single number how far one record is from another

Standardization: subtract mean and divide by standard deviation (Normalization)

The distance metric is used to measure how far two records are from one another. One metric is Euclidean distance:

There are other distance metrics, such as the Manhattan distance. Another one is the Mahalanobis distance, which is used for numeric data and accounts for the correlation between variables.
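
A quick sketch of standardization and the two simplest distance metrics, using made-up values. The function names are my own.

```python
import math
from statistics import mean, stdev

def standardize(column):
    """Subtract the mean and divide by the standard deviation (z-scores)."""
    mu, sd = mean(column), stdev(column)
    return [(v - mu) / sd for v in column]

def euclidean(a, b):
    """Straight-line distance: square root of the sum of squared differences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan(a, b):
    """Sum of absolute differences, one dimension at a time."""
    return sum(abs(x - y) for x, y in zip(a, b))

print(standardize([1, 2, 3]))
print(euclidean((0, 0), (3, 4)), manhattan((0, 0), (3, 4)))
```

Standardizing first matters because both distances add up differences across variables, so a variable measured in large units would otherwise dominate the result.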

Choosing K is important for K-Nearest Neighbors. The simplest choice is to set K = 1, known as the 1-nearest neighbor classifier. This is rarely the best choice; you will usually get better performance with K > 1.

If K is too low, we may be overfitting by including the noise in the data.

If K is too high, we’ll over smooth the data.

The best balance is determined by accuracy metrics. But it depends on the nature of the data.
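
The classification steps described earlier can be sketched as follows, using Euclidean distance and a majority vote. The training points are made up, and a real application would standardize the predictors first.

```python
import math
from collections import Counter

def knn_classify(train_x, train_y, new_x, k):
    """Assign new_x the majority class among its k nearest training records."""
    distances = sorted(
        (math.dist(x, new_x), label) for x, label in zip(train_x, train_y)
    )
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Made-up training data: two well separated groups.
train_x = [(1, 1), (1, 2), (2, 1), (6, 6), (7, 6), (6, 7)]
train_y = ["a", "a", "a", "b", "b", "b"]

print(knn_classify(train_x, train_y, (2, 2), k=3))
print(knn_classify(train_x, train_y, (6.5, 6.5), k=3))
```

For prediction of a numeric outcome, the last line of the function would average the outcomes of the nearest records instead of taking a vote.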

Tree Models

Tree models, also called classification and regression trees, decision trees, or just trees, are a popular and effective classification (and regression) method. A decision tree will produce a set of rules to predict an outcome.

Some terms:

Recursive partitioning: dividing and subdividing data with the goal of making the outcome in each subdivision as homogeneous as possible

Split value: predictor value that divides records into those where the predictor is less than the split value, and those where it’s more

Node: graphical representation of split value

Leaf: end of a set of if-then rules, or branches of a tree

Loss: number of misclassifications at a stage in the splitting process, more loss = more impurity

Impurity: mix of the class found in subpartition of data, more mix = more impure

Pruning: taking a grown tree and cutting its branches back to reduce overfitting

Tree models are just ‘if-then-else’ rules. Trees can discover hidden patterns corresponding to complex interactions in the data.

To construct a decision tree, use recursive partitioning.

If a fully grown tree overfits the data, it must be pruned back so that it captures the signal and not the noise.

The random forest (multiple-tree algorithms) can yield better predictive performance.
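
To give a feel for one step of recursive partitioning, here is a sketch that searches a single numeric predictor for the split value with the lowest weighted Gini impurity. A real tree would repeat this across all predictors and then recurse into each partition. The data and function names are made up.

```python
def gini(labels):
    """Gini impurity: 0 for a pure partition, larger for a more mixed one."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def best_split(values, labels):
    """Return (split value, weighted impurity) of the best single split."""
    best = (None, float("inf"))
    for threshold in sorted(set(values))[1:]:  # skip the minimum: nothing is below it
        left = [l for v, l in zip(values, labels) if v < threshold]
        right = [l for v, l in zip(values, labels) if v >= threshold]
        weighted = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if weighted < best[1]:
            best = (threshold, weighted)
    return best

values = [1, 2, 3, 10, 11, 12]
labels = [0, 0, 0, 1, 1, 1]
print(best_split(values, labels))
```

On this toy data the best split separates the two classes completely, so both partitions are pure and the weighted impurity drops to zero.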

Bagging and the Random Forest

Some terms:

Ensemble: form a prediction using a collection of models (model averaging)

Bagging: form collection of models by bootstrapping data

Random forest: a type of bagged estimate based on multiple decision trees

Variable importance: measure the importance of the predictor variable in the performance of the model

Ensemble models provide a good predictive model with little effort. They’ll improve model accuracy by combining the results from a collection of models.

Random forest will help determine which predictor is important and can help discover complex relationships between predictors. The random forest does have hyperparameters which should be tuned using cross-validation to avoid overfitting. A useful output of random forest is a measure of variable importance.

There are two ways to measure variable importance:

By mean decrease in Gini impurity score for all nodes that were split on a variable

By decrease in accuracy of the model if values of the variable are randomly permuted
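
The second approach, permuting a variable and measuring the drop in accuracy, works with any fitted model. The sketch below uses a hypothetical stand-in `toy_model` instead of a real random forest, with made-up data, just to show the mechanics.

```python
import random

def accuracy(model, rows, labels):
    """Share of rows for which the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)

def permutation_importance(model, rows, labels, feature, seed=0):
    """Decrease in accuracy after randomly shuffling one feature column."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    shuffled = [r[feature] for r in rows]
    rng.shuffle(shuffled)
    permuted = [r[:feature] + [v] + r[feature + 1:] for r, v in zip(rows, shuffled)]
    return baseline - accuracy(model, permuted, labels)

# Hypothetical stand-in for a fitted model: it only looks at feature 0.
def toy_model(row):
    return 1 if row[0] > 0.5 else 0

rows = [[i % 2, (i * 7) % 5] for i in range(20)]
labels = [r[0] for r in rows]

imp0 = permutation_importance(toy_model, rows, labels, feature=0)
imp1 = permutation_importance(toy_model, rows, labels, feature=1)
print(imp0, imp1)
```

Shuffling a feature the model never uses cannot change its predictions, so that feature's importance comes out as exactly zero, while shuffling the feature the model relies on hurts accuracy.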

Boosting

Boosting is a technique for creating an ensemble of models. There are several variants of the algorithm that are used: gradient boosting, XGBoost, and AdaBoost.

Some terms:

Boosting: a technique to fit a sequence of models by giving more weight to the records with large residuals in each successive round

Adaboost: reweights data based on residuals

Gradient boosting: cast in terms of minimizing a cost function (similar to Adaboost but instead of adjusting weights, gradient boosting fits models to a pseudo-residual)

XGBoost: Extreme gradient boosting algorithm (pretty popular and efficient software package)

Regularization: adding a penalty term to the cost function on the number of parameters in the model in order to avoid overfitting

Hyperparameters: Parameters that need to be tuned before fitting the algorithm

Any modeling technique is potentially prone to overfitting. Overfitting can be reduced by a judicious selection of predictor variables.

Unsupervised Learning Section

Principal Components Analysis

Principal components analysis (PCA) is a technique to discover ways in which numeric variables covary. The idea in PCA is to combine multiple numeric predictor values and turn them into a smaller set of variables. Principal components are calculated to minimize correlation between components, thus reducing redundancy.

Some terms:

Principal component: a linear combination of predictor variables

Loadings: weights that transform predictors into components

Scree plot: plot of variances of components, showing the relative importance of components

If your goal is to reduce the dimension of data, you must decide how many principal components to select. It’s best to select components that explain ‘most’ of the variance. You can do this visually by using the scree plot.
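
For just two variables, the share of variance explained by each principal component can be worked out in closed form from the covariance matrix, which is what a scree plot would display. This is a sketch under that two-variable assumption, with made-up data; real PCA on more variables needs a linear algebra library.

```python
import math
from statistics import mean

def covariance(x, y):
    """Sample covariance of two equally long lists."""
    x_bar, y_bar = mean(x), mean(y)
    return sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y)) / (len(x) - 1)

def explained_variance_2d(x, y):
    """Share of total variance captured by each of the two principal components."""
    a, b, c = covariance(x, x), covariance(x, y), covariance(y, y)
    centre = (a + c) / 2
    spread = math.sqrt(((a - c) / 2) ** 2 + b ** 2)
    eigenvalues = [centre + spread, centre - spread]  # eigenvalues of [[a, b], [b, c]]
    total = sum(eigenvalues)
    return [ev / total for ev in eigenvalues]

# Made-up, strongly correlated variables.
x = [1, 2, 3, 4, 5]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
ratios = explained_variance_2d(x, y)
print(ratios)
```

Because the two variables here are almost perfectly correlated, nearly all of the variance lands on the first component, which is the situation where dropping the second component loses very little.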

K-Means Clustering

Clustering means dividing data into different groups, where records in each group are similar to each other. The point of clustering is to identify meaningful groups of data. K-means was one of the earliest clustering methods to be developed and is still widely used.

Some terms:

Cluster: a group of records that are similar

Cluster mean: vector of variable means for the records in a cluster

K: number of clusters

The K-means algorithm requires that you specify the number of clusters K up front.
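
A bare-bones sketch of K-means: assign each record to the nearest cluster mean, recompute the means, and repeat. The starting centers are passed in by hand here, which is an assumption made to keep the example repeatable; implementations usually pick them at random. The points are made up.

```python
import math

def k_means(points, centers, iterations=10):
    """Alternate between assigning points to centers and updating the centers."""
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: math.dist(p, centers[i]))
            clusters[nearest].append(p)
        # New center = mean of each variable over the records in the cluster.
        centers = [
            tuple(sum(c) / len(cluster) for c in zip(*cluster)) if cluster else center
            for cluster, center in zip(clusters, centers)
        ]
    return centers, clusters

points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
centers, clusters = k_means(points, centers=[(0, 0), (10, 10)])  # K = 2
print(centers)
print(clusters)
```

The length of the `centers` list is what sets K, so trying a different number of clusters just means passing a different number of starting centers.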

Hierarchical Clustering

Hierarchical clustering is an alternative to K-means that can yield very different clusters. It will allow you to visualize the effect of specifying different numbers of clusters. Hierarchical clustering begins with every record in its own cluster.

Some terms:

Dendrogram: a visual representation of the records and the hierarchy of clusters to which they belong

Distance: a measure of how close one record is to another

Dissimilarity: a measure of how close one cluster is to another

Hierarchical clustering doesn’t scale well to large data sets, so it is mostly used for small ones.

The main algorithm for hierarchical clustering is the agglomerative algorithm, which merges similar clusters together.
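
The agglomerative idea can be sketched in a few lines: start with every record in its own cluster, then repeatedly merge the two least dissimilar clusters. The sketch uses single linkage (distance between the two closest records) as the dissimilarity, which is just one of several possible choices, and the points are made up.

```python
import math

def agglomerative(points, n_clusters):
    """Merge the two closest clusters until only n_clusters remain."""
    clusters = [[p] for p in points]  # every record starts in its own cluster

    def dissimilarity(a, b):
        # Single linkage: distance between the two closest records.
        return min(math.dist(p, q) for p in a for q in b)

    while len(clusters) > n_clusters:
        i, j = min(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda pair: dissimilarity(clusters[pair[0]], clusters[pair[1]]),
        )
        clusters[i] = clusters[i] + clusters[j]  # merge cluster j into cluster i
        del clusters[j]
    return clusters

points = [(0, 0), (0, 1), (5, 5), (5, 6), (10, 0)]
result = agglomerative(points, n_clusters=3)
print(result)
```

Every pass compares all pairs of clusters, which gives a sense of why this approach gets expensive on large data sets.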

Scaling Data

Unsupervised learning often requires data to be scaled. Variables need to be transformed to similar scales if they were measured on different scales. We don’t want the scale to impact the algorithm.

Some terms:

Scaling: squashing or expanding data to bring multiple variables to the same scale

Normalization: subtracting the mean and dividing by standard deviation (one method of scaling)

Gower’s distance: brings all variables to the 0-1 range (often used with categorical and mixed numeric data)

The idea of Gower’s distance:

For numeric variables and ordered factors, distance is calculated as the absolute value of the difference between two records

For categorical variables, the distance is 1 if the categories of two records are different and 0 if they are the same.
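
A simplified sketch of Gower's distance for two records with mixed variables. Numeric differences are divided by the variable's range so they land in the 0-1 range, categorical variables contribute 0 or 1, and the parts are averaged. The ranges, record values, and function name are assumptions for illustration.

```python
def gower_distance(a, b, ranges):
    """Average per-variable distance; ranges[i] is None for categorical variables."""
    parts = []
    for x, y, r in zip(a, b, ranges):
        if r is None:
            parts.append(0.0 if x == y else 1.0)  # categorical: same or different
        else:
            parts.append(abs(x - y) / r)          # numeric: scaled to 0-1 by the range
    return sum(parts) / len(parts)

# Hypothetical records: (age, income, housing status).
rec_a = (30, 50000, "rent")
rec_b = (40, 70000, "own")
ranges = (50, 100000, None)  # assumed ranges of age and income in the data set

print(gower_distance(rec_a, rec_b, ranges))
```

Because every variable contributes a value between 0 and 1, no single variable can dominate the distance just because it is measured in larger units.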

[End]

![How it works... - Python Machine Learning Cookbook - Second Edition [Book] How it works... - Python Machine Learning Cookbook - Second Edition [Book]](https://substackcdn.com/image/fetch/$s_!hHYu!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F95af9c3b-8274-4962-bb1f-b036fec03691_2180x600.png)