A General Guide to Machine Learning (Part One)

Machine learning is about building algorithms that, to be useful, rely on a collection of examples of some phenomenon. It can also be defined as the process of solving a problem by gathering a dataset and then building a statistical model based on that dataset. The model is then used to solve the problem.

Types of Learning

Learning can be supervised, semi-supervised, unsupervised, or reinforcement.

Supervised Learning

In supervised learning, the dataset is a collection of labeled examples:

{(x_i, y_i)}, i = 1, ..., N

Each element x_i among the N examples is called a feature vector. A feature vector is a vector in which each dimension contains a value that describes the example in some way. That value is called a feature. The label y_i can be an element of a finite set of classes, a real number, or a more complex structure.

The goal of supervised learning is to use the dataset to produce a model that takes a feature vector x as input and outputs information from which the label for that feature vector can be deduced.

Unsupervised Learning

In unsupervised learning, the dataset is a collection of unlabeled examples:

{x_i}, i = 1, ..., N

Again, x is a feature vector. The goal of unsupervised learning is to create a model that takes a feature vector x as input and transforms it either into another vector or into a value that can be used to solve a problem.

Semi-Supervised Learning

In semi-supervised learning, the dataset contains both labeled and unlabeled examples. Usually, the number of unlabeled examples is much higher than the number of labeled examples. Semi-supervised learning has the same goal as supervised learning. The hope is that the many unlabeled examples help the learning algorithm find a better model.

Reinforcement Learning

In reinforcement learning, the machine is capable of perceiving the state of its environment as a vector of features. The machine can execute actions in every state. Different actions bring different rewards and can also move the machine to another state of the environment. The goal of a reinforcement learning algorithm is to learn a policy.

A policy is a function that takes the feature vector of a state as input and outputs an optimal action to execute in that state. An action is optimal if it maximizes the expected average reward.

How Supervised Learning Works

Supervised learning starts with gathering the data. The data is a collection of pairs (input, output). Inputs can be anything, such as email messages or pictures. Outputs are usually real numbers or labels such as “cat”, “turtle”, or “dog”. In some cases, outputs are vectors, sequences, or have some other structure.

Let’s say you want to use supervised learning for spam detection. You can gather data by collecting a set of email messages and labeling each one as “spam” or “not_spam”. Now you can convert each email message into a feature vector.

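A common way to convert a text into a feature vector, called bag of words, is to take a dictionary of English words (say, 20,000 alphabetically sorted words) and stipulate that each feature in the vector corresponds to one word in the dictionary: the feature equals 1 if the email message contains that word and 0 otherwise. Every message thus becomes a 20,000-dimensional feature vector.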
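
The bag-of-words idea can be sketched in a few lines of Python. This is a minimal illustration, assuming a binary encoding (1 if the message contains the dictionary word, 0 otherwise); the five-word vocabulary, the example messages, and the function name bag_of_words are hypothetical stand-ins for a real dictionary and real emails.

```python
def bag_of_words(text, vocabulary):
    """Return a binary feature vector: 1 if the vocabulary word occurs in text, else 0."""
    # Lowercase each whitespace-separated token and strip simple punctuation.
    tokens = {token.strip(".,!?;:\"'()").lower() for token in text.split()}
    return [1 if word in tokens else 0 for word in vocabulary]


# Hypothetical five-word dictionary standing in for a full-size one.
vocabulary = ["a", "free", "meeting", "offer", "tomorrow"]

spam_vector = bag_of_words("Claim a FREE offer now!", vocabulary)
ham_vector = bag_of_words("Meeting moved to tomorrow.", vocabulary)

print(spam_vector)
print(ham_vector)
```

Each position in the resulting list corresponds to one dictionary word, so every message maps to a vector of the same length regardless of how long the message is.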

Some algorithms require transforming labels into numbers. A convenient way to illustrate supervised learning is the support vector machine (SVM). This algorithm requires that the positive label (in our case, “spam”) has the numeric value +1 (one) and the negative label (“not_spam”) has the value -1 (minus one).
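
As a small sketch, the label conversion could look like this in Python. The names LABEL_TO_NUMBER and encode_labels are illustrative, not part of any library.

```python
# Mapping from text labels to the numeric values described in the text.
LABEL_TO_NUMBER = {"spam": 1, "not_spam": -1}


def encode_labels(labels):
    """Convert a list of text labels into +1 / -1 values."""
    return [LABEL_TO_NUMBER[label] for label in labels]


encoded = encode_labels(["spam", "not_spam", "spam"])
print(encoded)
```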

Once you have done this, you can apply the learning algorithm to the dataset in order to get the model.

SVM sees every feature vector as a point in a high-dimensional space. The algorithm puts all feature vectors on an imaginary 20,000-dimensional plot and draws a hyperplane that separates examples with positive labels from examples with negative labels. The boundary separating the examples of different classes is called the decision boundary.

The equation of the hyperplane is given by two parameters, a real-valued vector w of the same dimensionality as the input feature vector x, and a real number b:

wx - b = 0

where wx means:

w^(1)x^(1) + w^(2)x^(2) + ... + w^(D)x^(D)

and D is the number of dimensions of the feature vector x.

The predicted label for some input feature vector x is:

y = sign(wx - b)

where sign is a mathematical operator that takes any value as input and returns +1 if the input is a positive number or -1 if the input is a negative number.
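
To make the prediction step concrete, here is a minimal Python sketch. It assumes the decision rule has the form y = sign(wx - b); the parameter values w and b below are made up for illustration and were not learned from data. The text does not say what sign returns for an input of exactly 0, so the sketch assigns that case to -1 as an arbitrary choice.

```python
def dot(w, x):
    """Compute wx, the sum of w^(k) * x^(k) over all D dimensions."""
    return sum(w_k * x_k for w_k, x_k in zip(w, x))


def predict(w, b, x):
    """Return +1 or -1 depending on which side of the hyperplane x lies."""
    score = dot(w, x) - b
    # Assumption: a score of exactly 0 is assigned to the negative class.
    return 1 if score > 0 else -1


# Hypothetical parameters for a 2-dimensional feature space.
w = [2.0, -1.0]
b = 0.5

label_a = predict(w, b, [1.0, 0.0])  # score = 2.0 - 0.5 = 1.5
label_b = predict(w, b, [0.0, 1.0])  # score = -1.0 - 0.5 = -1.5
print(label_a, label_b)
```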

The goal is to leverage the dataset and find the optimal values for parameters w and b.

SVM can also incorporate kernels that can make the decision boundary non-linear. In some cases, it may be impossible to perfectly separate the two groups of points because of noise in the data, or because outliers exist. You should remember that any classification learning algorithm that builds a model implicitly or explicitly creates a decision boundary. The decision boundary can be straight, curved, or have a complex form. The form of the decision boundary determines the accuracy of the model.

Notation

A scalar is a simple numerical value, like 19 or -2.57. Variables that take scalar values are denoted by an italic letter.

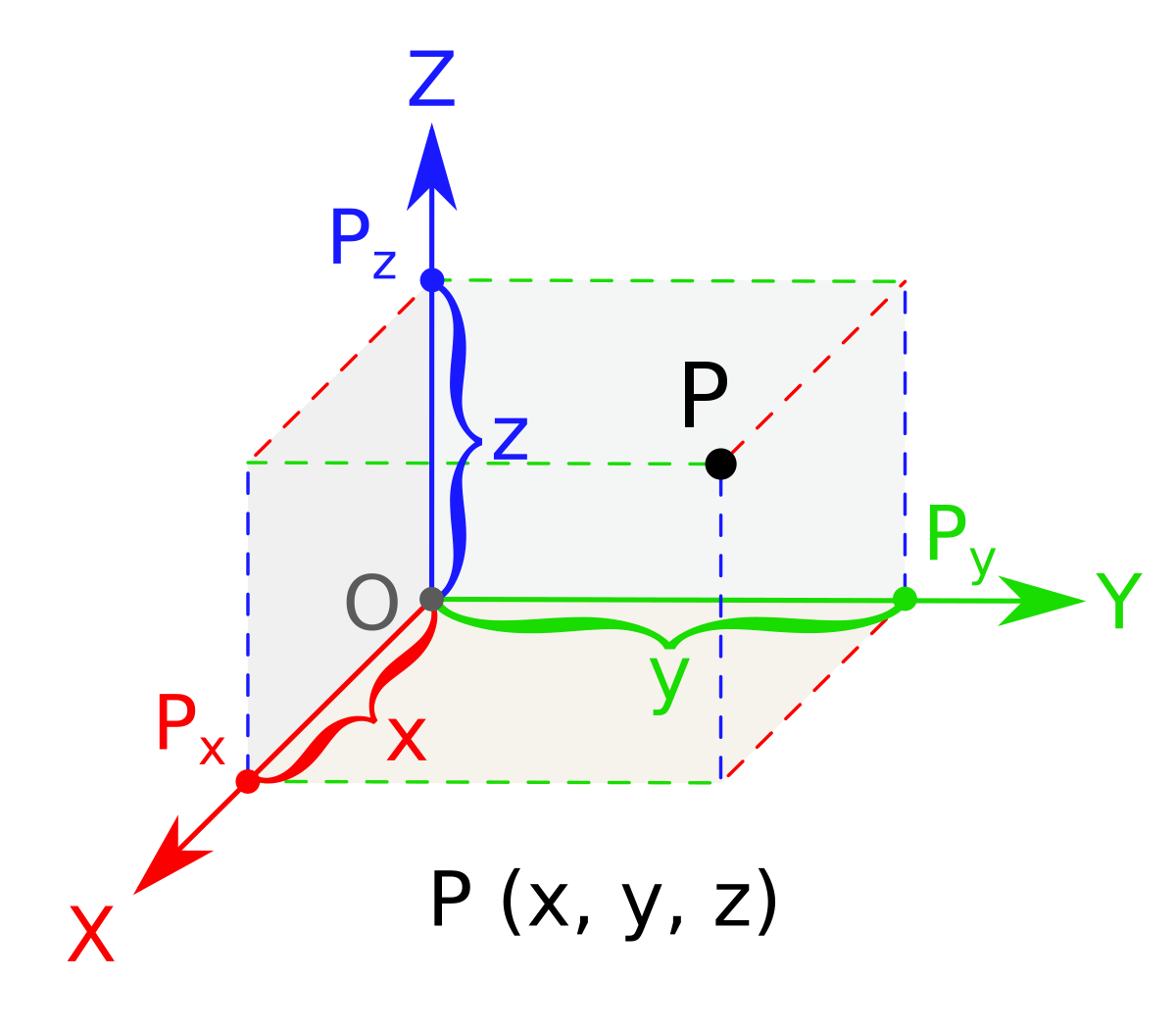

A vector is an ordered list of scalar values, called attributes. We denote vectors as bold characters, for example x or w. Vectors can be visualized as arrows that point in some direction, as well as points in a multi-dimensional space. We denote an attribute of a vector as an italic value with an index:

w^(k) or x^(k)

The index k denotes a specific dimension of the vector, that is, the position of the attribute in the list. Don’t confuse this with the power operator.

A matrix is a rectangular array of numbers arranged in rows and columns. Matrices are denoted with bold capital letters, such as A or W.

A set is an unordered collection of unique elements. A set of numbers can be finite, containing a fixed number of values, or infinite, containing all values in some interval. If a set includes all values between a and b, including a and b, it is denoted using square brackets, as [a, b]. If the set doesn’t include the values a and b, it is denoted using parentheses, as (a, b).

Derivative and Gradient

The derivative f’ of a function f is a function or a value that describes how fast f grows (or decreases). If the derivative is a constant value, then the function grows (or decreases) at the same rate at every point x of its domain.

The process of finding a derivative is called differentiation.

The derivatives of basic functions are known. For example, if f(x) = x^2, then f’(x) = 2x.

If the function is not basic, we can find its derivative using the chain rule. If F(x) = f(g(x)), where f and g are some functions, then F’(x) = f’(g(x))g’(x). For example, if F(x) = (6x + 1)^2, then g(x) = 6x + 1 and f(g(x)) = (g(x))^2. By applying the chain rule, we find:

F’(x) = f’(g(x))g’(x) = 2(6x + 1) · 6 = 12(6x + 1) = 72x + 12
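
As a sanity check, the chain-rule derivative of F(x) = (6x + 1)^2, which is 2(6x + 1) · 6, can be compared against a finite-difference estimate. The sketch below uses a central difference with a small step h; the helper name numerical_derivative and the step size are illustrative choices.

```python
def F(x):
    return (6 * x + 1) ** 2


def F_prime(x):
    # Chain rule: derivative of the outer square times derivative of the inner 6x + 1.
    return 2 * (6 * x + 1) * 6


def numerical_derivative(func, x, h=1e-6):
    """Central-difference estimate of the derivative of func at x."""
    return (func(x + h) - func(x - h)) / (2 * h)


x = 2.0
exact = F_prime(x)
estimate = numerical_derivative(F, x)
print(exact, estimate)
```

The two values should agree up to a small floating-point error, which is a quick way to catch mistakes in a hand-derived derivative.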

Gradient is the generalization of derivatives for functions that take several inputs. A gradient of a function is a vector of partial derivatives.
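
A short Python sketch can illustrate the idea. The function f(a, b) = a^2 + 3ab is a made-up example; its partial derivatives are 2a + 3b with respect to a and 3a with respect to b, and the gradient is the vector holding both. The helper numerical_gradient is a hypothetical check that estimates each partial derivative by varying one input at a time.

```python
def f(a, b):
    return a ** 2 + 3 * a * b


def gradient_f(a, b):
    # Partial derivatives: df/da = 2a + 3b, df/db = 3a.
    return (2 * a + 3 * b, 3 * a)


def numerical_gradient(func, point, h=1e-6):
    """Estimate each partial derivative with a central difference."""
    grad = []
    for k in range(len(point)):
        forward = list(point)
        backward = list(point)
        forward[k] += h
        backward[k] -= h
        grad.append((func(*forward) - func(*backward)) / (2 * h))
    return tuple(grad)


analytic = gradient_f(1.0, 2.0)
numeric = numerical_gradient(f, (1.0, 2.0))
print(analytic, numeric)
```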

Random Variable

A random variable is a variable whose possible values are numerical outcomes of a random phenomenon. There are two types of random variables: discrete and continuous.

A discrete random variable takes on only a countable number of distinct values, such as green, yellow, blue, or 1, 2, 3.

The probability distribution of a discrete random variable is described by a list of probabilities associated with each of its possible values. This list is called a probability mass function. Each probability in a probability mass function is a value greater than or equal to 0. The sum of probabilities equals 1.
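
The two properties of a probability mass function are easy to check in code. In this sketch the probabilities assigned to each color are invented for illustration, and exact fractions are used so that the sum can be compared to 1 without rounding error.

```python
from fractions import Fraction

# Hypothetical probability mass function over three colors.
pmf = {
    "green": Fraction(1, 2),
    "yellow": Fraction(3, 10),
    "blue": Fraction(1, 5),
}


def is_valid_pmf(pmf):
    """Check that every probability is >= 0 and that they sum to 1."""
    return all(p >= 0 for p in pmf.values()) and sum(pmf.values()) == 1


print(is_valid_pmf(pmf))
```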

A continuous random variable takes an infinite number of possible values in some interval. Examples include weight and height. Because the number of values of a continuous random variable X is infinite, the probability Pr(X = c) for any c is 0. The probability distribution of a continuous random variable is described by a probability density function.

Parameters vs Hyperparameters

A hyperparameter is a property of a learning algorithm usually having a numerical value. That value influences the way the algorithm works. Hyperparameters aren’t learned by the algorithm itself from data.

Parameters are variables that define the model learned by the learning algorithm. Parameters are directly modified by the learning algorithm based on training data.

Classification vs Regression

Classification is a problem of automatically assigning a label to an unlabeled example.

The classification problem, in machine learning, is solved by a classification learning algorithm that takes a collection of labeled examples as inputs and produces a model that can take an unlabeled example as input and either directly output a label or output a number that can be used to deduce the label.

In a classification problem, a label is a member of a finite set of classes. If the set contains two classes, the problem is called binary classification. Multiclass classification is a classification problem with three or more classes.

Regression is a problem of predicting a real-valued label given an unlabeled example. The regression problem is solved by a regression learning algorithm that takes a collection of labeled examples as inputs and produces a model that can take an unlabeled example as input and output a target.

Model-Based vs Instance-Based Learning

Most supervised learning algorithms are model-based. Model-based learning algorithms use the training data to create a model that has parameters learned from the training data.

Instance-based learning algorithms use the whole dataset as the model. One instance-based algorithm is k-Nearest Neighbors (kNN). To classify an input example, the kNN algorithm looks at the close neighborhood of that example in the space of feature vectors and outputs the label it sees most often in this neighborhood.
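
A minimal kNN classifier can be sketched as follows. Two things here are assumptions not stated in the text: closeness is measured with Euclidean distance, and the neighborhood consists of the k = 3 nearest training examples. The tiny dataset and the name knn_predict are made up for illustration.

```python
import math
from collections import Counter


def knn_predict(training_examples, x, k=3):
    """Return the most frequent label among the k training examples closest to x."""
    # Sort the stored examples by Euclidean distance to the input feature vector.
    neighbors = sorted(training_examples, key=lambda ex: math.dist(ex[0], x))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical dataset of (feature vector, label) pairs.
training_examples = [
    ((1.0, 1.0), "cat"),
    ((1.2, 0.8), "cat"),
    ((0.9, 1.1), "cat"),
    ((5.0, 5.0), "dog"),
    ((5.2, 4.9), "dog"),
    ((4.8, 5.1), "dog"),
]

prediction_a = knn_predict(training_examples, (1.1, 1.0))
prediction_b = knn_predict(training_examples, (5.0, 5.1))
print(prediction_a, prediction_b)
```

Note that there is no training step: the stored examples themselves act as the model, which is what makes the algorithm instance-based.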

Shallow vs Deep Learning

A shallow learning algorithm learns the parameters of the model directly from the features of the training examples. Most supervised learning algorithms are shallow. The notable exceptions are neural network learning algorithms. In deep neural network learning, most model parameters are learned not directly from the features of the training examples but from the outputs of the preceding layers.

Learning Algorithm

Each learning algorithm consists of three parts:

a loss function

an optimization criterion based on the loss function

an optimization routine leveraging training data to find a solution to the optimization criterion

When reading literature on machine learning, you’ll often encounter references to gradient descent or stochastic gradient descent. These are the most frequently used optimization algorithms in cases where the optimization criterion is differentiable.

Gradient descent is an iterative optimization algorithm for finding the minimum of a function. It can be used to find optimal parameters for linear and logistic regression.
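
The three parts of a learning algorithm can be seen together in a small sketch of gradient descent for linear regression with one feature. The assumptions are: the loss is the squared error, the optimization criterion is the mean squared error over the training data, and the optimization routine is plain (batch) gradient descent. The data, the learning rate, and the number of epochs are made-up values; w and b are parameters learned from data, while learning_rate and epochs are hyperparameters set by hand.

```python
def fit_linear_regression(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by minimizing the mean squared error with gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Partial derivatives of the mean squared error with respect to w and b.
        grad_w = sum(-2 * x * (y - (w * x + b)) for x, y in zip(xs, ys)) / n
        grad_b = sum(-2 * (y - (w * x + b)) for x, y in zip(xs, ys)) / n
        # Step against the gradient, scaled by the learning rate.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical training data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit_linear_regression(xs, ys)
print(round(w, 4), round(b, 4))
```

With a learning rate that is too large the updates can diverge instead of converging, which is one reason this hyperparameter usually has to be tuned.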

[End of Part One]